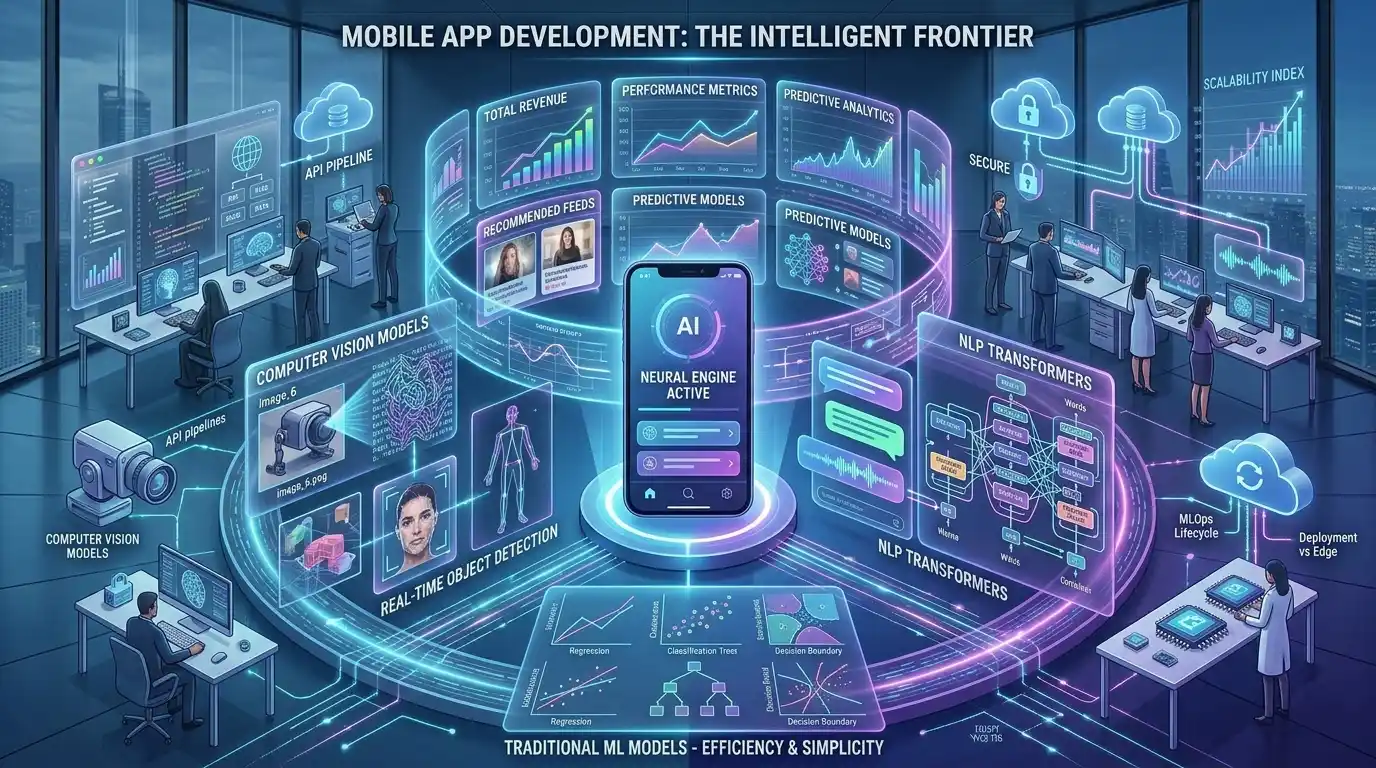

For modern tech founders, Chief Technology Officers (CTOs), and VPs of Product, launching a standard software application is no longer enough to secure market share. Users now expect hyper-personalized experiences, predictive analytics, and seamless automation features that can only be delivered through Artificial Intelligence.

However, transitioning from traditional software engineering to building AI apps introduces a radical shift in project management. Traditional apps rely on deterministic, rule-based logic (If A, then B). AI applications are probabilistic; they rely on massive datasets, trained machine learning models, and complex cloud inference infrastructures.

Understanding the complete lifecycle of an AI application, from the initial Proof of Concept (PoC) through User Experience (UX) design to model deployment, is the most reliable way to prevent budget overruns and set up a successful product launch.

In this comprehensive playbook, we will walk you through the blueprint required to launch a successful AI app. If your enterprise is looking to bypass the costly trial-and-error phase, partnering with an elite AI application development company like MindRind helps ensure your product is architected for scalability, security, and market impact.

Chapter 1: The Ideation and Proof of Concept (PoC) Phase

The biggest mistake tech startups make is treating an AI app like a standard CRUD (Create, Read, Update, Delete) application. Before writing a single line of frontend code in React Native or Swift, you must validate your machine learning hypothesis.

Defining the AI Problem

Not every feature needs Artificial Intelligence. If a problem can be solved with a simple heuristic algorithm or a database query, use that. AI should only be applied to tasks involving pattern recognition, natural language processing, predictive forecasting, or computer vision. To understand exactly how your backend systems will change, developers must grasp the core differences between traditional vs AI-powered app development.

Building the Proof of Concept (PoC)

Unlike standard software projects, AI development starts with a PoC to prove that the selected algorithm can actually achieve the desired accuracy.

- Data Sourcing: The engineering team gathers a sample dataset.

- Model Selection: Will the app use a pre-trained Large Language Model (LLM), or will it require a custom classification algorithm? To make this decision, architects must evaluate the specific machine learning app development services and models available for their niche.

- Feasibility Check: Can the model process the data fast enough to provide a good user experience on a mobile device?

Once the PoC achieves the required accuracy baseline, the project moves into the Minimum Viable Product (MVP) phase.
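To make the accuracy baseline and feasibility check concrete, here is a minimal Python sketch of a PoC gate. Everything in it is an illustrative assumption: the `evaluate_poc` helper, the keyword-based `toy_sentiment_model` standing in for a real model, the four-row sample dataset, and the 90% accuracy and 200 ms thresholds. A real PoC would use a held-out dataset and measure latency on target hardware.

```python
import time
from typing import Callable, Sequence, Tuple


def evaluate_poc(
    predict: Callable[[str], str],
    samples: Sequence[Tuple[str, str]],
    min_accuracy: float,
    max_latency_ms: float,
) -> dict:
    """Score a candidate model on labeled samples and check it against the PoC gates."""
    if not samples:
        raise ValueError("the PoC needs at least one labeled sample")
    correct = 0
    latencies_ms = []
    for text, expected in samples:
        start = time.perf_counter()
        predicted = predict(text)
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
        if predicted == expected:
            correct += 1
    accuracy = correct / len(samples)
    worst_latency_ms = max(latencies_ms)
    return {
        "accuracy": accuracy,
        "worst_latency_ms": worst_latency_ms,
        "passes": accuracy >= min_accuracy and worst_latency_ms <= max_latency_ms,
    }


def toy_sentiment_model(text: str) -> str:
    """Hypothetical stand-in for a real model: a single keyword rule."""
    return "positive" if "great" in text.lower() else "negative"


sample_dataset = [
    ("Great app, love it", "positive"),
    ("Crashes constantly", "negative"),
    ("great onboarding", "positive"),
    ("Not great, not terrible", "negative"),  # the toy rule gets this one wrong
]

report = evaluate_poc(
    toy_sentiment_model, sample_dataset, min_accuracy=0.9, max_latency_ms=200.0
)
print(report["accuracy"], report["passes"])  # 0.75 False
```

Here the toy model scores 75% against a 90% baseline, so the project would stay in the PoC phase rather than move on to the MVP.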

Chapter 2: The Architectural Crossroads (Edge AI vs. Cloud Inference)

One of the most consequential decisions in the AI product development lifecycle is determining where the AI model will actually “think,” a step known as inference. Will the processing happen on the user’s smartphone, or will it happen on a remote cloud server?

Cloud Inference (API-Driven Apps)

For applications relying on massive Large Language Models (like GPT-4 or Claude), the processing happens in the cloud, because models of that size are far too large to run on a phone. The mobile app simply acts as a frontend interface that sends data via APIs to a backend server (hosted on a service like Amazon SageMaker), where the heavy compute occurs.

- Pros: Access to virtually unlimited compute power; models can be updated seamlessly without pushing an app store update.

- Cons: Requires a constant internet connection; introduces network latency; ongoing cloud server costs can be extremely high.

Edge AI (On-Device Processing)

For applications that require real-time feedback with no network round trip, the AI model must be compressed and deployed directly onto the user’s mobile device. This is done using frameworks like Apple’s Core ML for iOS or Google’s TensorFlow Lite for Android.

- Pros: Works entirely offline; no per-request cloud inference costs; massive data privacy advantages because the user’s data never has to leave their phone.

- Cons: Drains mobile battery life faster; older smartphones may lack the Neural Processing Units (NPUs) required to run the models smoothly.
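One common way to compress a model for on-device use is quantization: storing each weight in 8 bits instead of 32. The sketch below illustrates the general idea in plain Python. It is not how Core ML or TensorFlow Lite implement it internally, and the function names and sample weights are made up for illustration.

```python
from typing import List, Tuple


def quantize_uint8(weights: List[float]) -> Tuple[List[int], float, float]:
    """Map float weights onto integer codes 0..255 (8-bit linear quantization)."""
    w_min, w_max = min(weights), max(weights)
    scale = (w_max - w_min) / 255.0
    if scale == 0.0:
        scale = 1.0  # all weights identical; any non-zero scale works
    codes = [round((w - w_min) / scale) for w in weights]
    return codes, scale, w_min


def dequantize(codes: List[int], scale: float, w_min: float) -> List[float]:
    """Recover approximate float weights from the 8-bit codes."""
    return [c * scale + w_min for c in codes]


# Illustrative weights; a real model has millions of them.
weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
codes, scale, w_min = quantize_uint8(weights)
restored = dequantize(codes, scale, w_min)

# Each restored weight is off by at most half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(codes[0], codes[-1], max_error <= scale / 2 + 1e-12)  # 0 255 True
```

Weights stored as 32-bit floats take 4 bytes each, while each code takes 1 byte, so this scheme shrinks weight storage by roughly 4x in exchange for a small, bounded rounding error.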

Deciding between these two infrastructures is complex. Product managers must carefully evaluate Edge AI vs Cloud APIs to balance latency, privacy, and development costs.
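As a rough illustration, the trade-offs above can be written down as a first-pass decision rule. The field names, the 200 MB on-device budget, and the 150 ms network round-trip figure are hypothetical placeholders, not benchmarks. Treat this as a conversation starter for your product team rather than a formula.

```python
from dataclasses import dataclass


@dataclass
class InferenceRequirements:
    must_work_offline: bool
    data_must_stay_on_device: bool
    max_latency_ms: int
    model_size_mb: int


def recommend_target(
    req: InferenceRequirements,
    device_model_budget_mb: int = 200,        # assumed on-device size budget
    network_round_trip_ms: int = 150,         # assumed typical mobile round trip
) -> str:
    """Return 'edge', 'cloud', or a conflict message for the given requirements."""
    needs_device = req.must_work_offline or req.data_must_stay_on_device
    if req.model_size_mb > device_model_budget_mb:
        if needs_device:
            return "conflict: shrink the model or relax the offline/privacy constraint"
        return "cloud"
    if needs_device or req.max_latency_ms < network_round_trip_ms:
        return "edge"
    return "cloud"


form_checker = InferenceRequirements(True, False, 50, 20)
chat_assistant = InferenceRequirements(False, False, 3000, 40000)
print(recommend_target(form_checker))    # edge
print(recommend_target(chat_assistant))  # cloud
```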

Chapter 3: Industry-Specific Feature Engineering

The lifecycle of an AI app varies wildly depending on the industry it targets. AI features must be engineered to solve highly specific, niche pain points.

The HealthTech Sector

If you are developing a medical app, regulatory compliance (such as HIPAA in the US) dictates the entire software architecture. A healthcare app cannot send protected health information (PHI) to a public cloud API unless the provider has signed a Business Associate Agreement (BAA) and the data is properly safeguarded. Instead of calling consumer-grade APIs, developers build highly secure, encrypted data pipelines to train predictive models capable of analyzing X-rays or summarizing patient records. For a complete breakdown of compliance and features, explore our guide on AI healthcare app development.

The Fitness and Wearables Sector

In the fitness sector, AI is moving far beyond simple step counters. Today’s top apps utilize local Edge AI and Computer Vision. By accessing the user’s smartphone camera, the app runs real-time pose estimation algorithms to analyze the user’s workout form, count reps, and provide instant audio feedback. Integrating these camera sensors and wearable APIs (like Apple HealthKit) requires specialized knowledge of AI fitness app development services.
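To show what rep counting looks like once a pose model has produced body keypoints, here is a minimal sketch. It assumes the pose estimator (not shown) returns 2D coordinates for the hip, knee, and ankle on each frame. The angle thresholds of 90 and 160 degrees and the sample angle sequence are illustrative guesses for a squat, not calibrated values.

```python
import math
from typing import Iterable, Tuple

Point = Tuple[float, float]


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at point b, formed by the segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0.0:
        raise ValueError("keypoints must not coincide")
    cosine = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
    return math.degrees(math.acos(max(-1.0, min(1.0, cosine))))


def count_reps(
    knee_angles: Iterable[float], down_below: float = 90.0, up_above: float = 160.0
) -> int:
    """Count one rep each time the knee bends below one threshold, then straightens."""
    reps = 0
    is_down = False
    for angle in knee_angles:
        if angle < down_below:
            is_down = True
        elif angle > up_above and is_down:
            reps += 1
            is_down = False
    return reps


# Hip, knee, ankle forming a right angle at the knee.
print(round(joint_angle((0.0, 1.0), (0.0, 0.0), (1.0, 0.0))))  # 90

# Per-frame knee angles for two squats (made-up values).
print(count_reps([170, 120, 80, 120, 170, 85, 100, 88, 165, 170]))  # 2
```

Using two thresholds instead of one keeps small jitters in the keypoints from being counted as extra reps.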

Chapter 4: UX/UI Design for AI Applications

Even if your underlying machine learning model is a masterpiece of mathematics, a poor User Experience (UX) will cause your app to fail immediately upon launch.

Designing an AI app requires a completely different UX/UI paradigm. Users are accustomed to apps loading screens and returning data in milliseconds. However, generative AI models and complex image recognition algorithms often take several seconds to generate a response.

Masking AI Latency

If an app screen freezes for 5 seconds while a cloud API processes an image, the user will assume the app has crashed and delete it. Design teams must implement:

- Skeleton Screens and Shimmer Effects: To indicate that the system is actively working.

- Streaming Outputs: For generative text, the UI should stream the text word-by-word (like ChatGPT) rather than waiting for the entire block of text to load.

- Conversational Interfaces: Moving away from traditional buttons and menus toward natural language text and voice inputs.

Furthermore, AI occasionally makes mistakes. The UI must include “Human-in-the-Loop” (HITL) mechanisms, allowing the user to easily edit, regenerate, or provide thumbs-up/thumbs-down feedback on the AI’s output. To master this new design language, your product team must review the best practices for UX/UI design in generative AI applications.
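The streaming-output technique from the list above can be sketched in a few lines. This is a simplified illustration: `stream_words` is a hypothetical stand-in for a streaming API client, and `render` collects each intermediate screen state instead of repainting a real text view.

```python
from typing import Iterator, List


def stream_words(full_text: str) -> Iterator[str]:
    """Yield a response one word at a time, as a streaming API client might."""
    for word in full_text.split():
        yield word


def render(chunks: Iterator[str]) -> List[str]:
    """Return every intermediate screen state so the progressive reveal is visible."""
    frames = []
    shown = ""
    for chunk in chunks:
        shown = f"{shown} {chunk}".strip()
        frames.append(shown)  # a real UI would repaint the text view here
    return frames


frames = render(stream_words("Your workout plan is ready"))
for frame in frames:
    print(frame)
```

The user sees the first word as soon as it arrives instead of staring at a frozen screen until the full response is complete.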

Chapter 5: The Build Phase (Custom vs White-Label Solutions)

Once the architecture is decided and the UX is mapped out, the actual coding begins. For enterprise leaders, this phase introduces a critical business decision regarding intellectual property and source code ownership.

White-Label SaaS Solutions

Many companies try to bypass the development lifecycle by purchasing “White-Label” AI solutions. These are pre-built, generic AI applications where a vendor slaps your company’s logo on their software.

- The Problem: You do not own the underlying source code. You are locked into the vendor’s ecosystem. If your business scales or you need to integrate a highly specific machine learning feature, the white-label software will bottleneck your growth.

Custom AI Application Development

To build true enterprise value and secure intellectual property, tech founders must invest in custom engineering. A custom build lets you train the AI models on your proprietary data, design the app architecture to scale with your user base, and retain ownership of the final codebase (subject to the licenses of any third-party models and libraries it depends on).

If your enterprise is debating the long-term ROI of these two approaches, we highly recommend reading our comparative analysis on custom AI application development vs white-label solutions to make an informed financial decision.

Chapter 6: Partnering with an Elite App Development Agency

Building a custom AI application requires a multidisciplinary squad: Mobile Developers (Swift/Kotlin), Backend Engineers, Data Scientists, and UI/UX Designers. Building this entire team in-house from scratch can take over 8 months and cost millions of dollars in payroll.

To achieve immediate speed-to-market, the vast majority of successful tech startups and enterprises partner with specialized agencies. But how does that process actually work?

The Agency Workflow

When you partner with an elite agency, you are not just buying code; you are buying a streamlined, agile operation.

- The Discovery Phase: The agency maps out the tech stack, API requirements, and compliance guardrails.

- Wireframing & PoC: They deliver interactive UI wireframes alongside the mathematical Proof of Concept for the ML models.

- Agile Sprints: The app is built in 2-week iterations, allowing you to test features continuously before the final launch.

To ensure you know exactly how to manage this relationship and what deliverables to demand, review our complete guide on what to expect when hiring an artificial intelligence app development agency.

Why the USA Market is the Gold Standard

While offshore development is common, top-tier tech startups consistently seek out agencies based in or operating under United States standards. This ensures strict adherence to Intellectual Property (IP) laws, NDA enforcement, and timezone alignment for critical agile sprint meetings. If you are a founder looking to secure your product’s future, understanding why startups partner with AI app development companies in the USA will guide your outsourcing strategy.

Chapter 7: Launch, MLOps, and App Monetization

The launch of an AI application is not the finish line; it is the starting line. Once your app hits the iOS App Store or Google Play Store, the operational phase (MLOps) begins.

Unlike traditional apps, AI models suffer from “Data Drift.” As users interact with your app and input new types of data, the live inputs gradually stop resembling the data the model was trained on, and the model’s accuracy can degrade. The engineering team must continuously monitor prediction quality, retrain the models with fresh data, and push seamless over-the-air (OTA) updates to maintain performance.
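One widely used way to put a number on drift is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it is distributed in production. The sketch below is a minimal version under stated assumptions: the bin edges and sample values are invented for illustration, and the 0.25 alert threshold is a common rule of thumb rather than a universal standard.

```python
import math
from typing import List, Sequence


def bin_fractions(
    values: Sequence[float], edges: Sequence[float], floor: float = 1e-6
) -> List[float]:
    """Fraction of values in each bin; edges must be sorted ascending."""
    if not values:
        raise ValueError("need at least one value")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[sum(1 for e in edges if v >= e)] += 1
    # Floor empty bins so the logarithm below stays defined.
    return [max(c / len(values), floor) for c in counts]


def population_stability_index(
    baseline: Sequence[float], live: Sequence[float], edges: Sequence[float]
) -> float:
    """PSI = sum over bins of (live - baseline) * ln(live / baseline)."""
    expected = bin_fractions(baseline, edges)
    actual = bin_fractions(live, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


edges = [0.25, 0.5, 0.75]
training_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
live_scores = [0.8, 0.9, 0.85, 0.95, 0.6, 0.7, 0.9, 0.8]  # shifted toward high values

psi = population_stability_index(training_scores, live_scores, edges)
print("retrain" if psi > 0.25 else "stable")  # retrain
```

In practice a check like this would run on a schedule against production logs, with the retraining pipeline triggered when the index crosses the agreed threshold.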

Monetizing Your AI Product

Finally, the app must generate revenue. Because generative AI and cloud inference APIs are expensive to run, traditional app monetization models (like one-time purchases or cheap ad-supported tiers) often result in financial losses.
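A quick back-of-the-envelope calculation shows why. All figures below are hypothetical (2 cents per AI request, 300 requests per user per month, a $4.99 one-time price, a $9.99 monthly subscription), and amounts are kept in whole cents to avoid rounding surprises.

```python
def monthly_inference_cost_cents(requests_per_month: int, cost_per_request_cents: int) -> int:
    """Cloud inference cost one user generates in a month, in cents."""
    return requests_per_month * cost_per_request_cents


def months_until_loss(one_time_price_cents: int, monthly_cost_cents: int) -> float:
    """How many months of usage a one-time purchase can pay for."""
    return one_time_price_cents / monthly_cost_cents


# Hypothetical figures for illustration only.
cost = monthly_inference_cost_cents(requests_per_month=300, cost_per_request_cents=2)
print(cost)                                    # 600 cents, i.e. $6.00 per user per month
print(round(months_until_loss(499, cost), 2))  # 0.83: the $4.99 purchase is spent in under a month
print(999 - cost)                              # 399 cents of monthly margin on a $9.99 subscription
```

Under these assumptions a one-time purchase turns into a loss within the first month, while a subscription keeps a positive margin for as long as the user stays.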

Founders must implement smart monetization strategies, such as Freemium B2B SaaS models, tiered subscription layers, or strict token/API usage limits for free users. To ensure your app is highly profitable from day one, explore our playbook on how to monetize AI application development solutions.
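A usage limit for free users can be as simple as a per-user daily counter. The sketch below is an in-memory illustration only: the `DailyQuota` class and the limit of five generations per day are assumptions for the example, and a production system would persist the counts in a shared store and enforce them on the server.

```python
from collections import defaultdict
from datetime import date


class DailyQuota:
    """Track free-tier AI generations per user per day (in memory, for illustration)."""

    def __init__(self, free_limit: int = 5) -> None:
        self.free_limit = free_limit
        self._used = defaultdict(int)  # (user_id, day) -> generations used

    def try_consume(self, user_id: str, is_paid: bool, today: date) -> bool:
        """Return True if the request may proceed, False if the user should see an upgrade prompt."""
        if is_paid:
            return True
        key = (user_id, today)
        if self._used[key] >= self.free_limit:
            return False
        self._used[key] += 1
        return True


quota = DailyQuota(free_limit=5)
day = date(2025, 1, 1)
results = [quota.try_consume("free-user", is_paid=False, today=day) for _ in range(6)]
print(results)  # [True, True, True, True, True, False]
print(quota.try_consume("free-user", is_paid=False, today=date(2025, 1, 2)))  # True
```

The sixth request is the natural place to show the upgrade screen, and the counter resets on its own the next day because the date is part of the key.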

Launch Your AI App with MindRind

The lifecycle of an AI application is fraught with architectural complexities, compliance hurdles, and deep mathematical engineering. You cannot afford to launch a buggy, slow, or hallucinatory app into today’s hyper-competitive market.

At MindRind, we are a premium AI application development company. We take your vision from raw concept to a fully deployed, highly scalable mobile or web application. Our elite team handles the complete lifecycle, from securing HIPAA-compliant cloud architectures and edge-AI Core ML deployments to crafting stunning, latency-masking UI designs.

Don’t let your competitors beat you to the App Store. Contact MindRind today, and let’s start building your custom AI application.

Frequently Asked Questions

Traditional app development relies on static, rule-based coding (if/then logic) connected to standard databases. AI app development integrates machine learning models, neural networks, and probabilistic logic. This requires complex data pipelines, specialized cloud inference (or edge computing), and a UX design that accommodates AI processing times.

A PoC is the first mandatory step in the AI lifecycle. Before building the app’s frontend, data scientists test a sample dataset against various machine learning algorithms to mathematically prove that the AI can solve the specific problem with a high degree of accuracy.

It depends on your use case. Cloud APIs (like Amazon SageMaker or OpenAI) are best for applications requiring massive reasoning power, but they require an internet connection and incur ongoing server costs. Edge AI (running models directly on the user’s phone via Core ML or TensorFlow Lite) is best for offline use, real-time responsiveness with no network round trip (like fitness camera tracking), and strict data privacy.

AI models take time to generate answers. To prevent high uninstall rates, UI/UX designers must use “Latency Masking” techniques. This includes using skeleton loading screens, engaging animations, or streaming text outputs (word-by-word) so the user feels the app is highly responsive even while the AI is “thinking.”

Machine Learning Operations (MLOps) is the ongoing maintenance phase after the app launches. AI models degrade in accuracy over time as they encounter new, unseen user data (Data Drift). MLOps involves monitoring the model’s performance in the real world and continuously retraining and updating it to ensure the app remains accurate.

White-label apps are pre-built and generic. You do not own the source code, which limits your company’s valuation and intellectual property. Furthermore, white-label solutions cannot be deeply customized to fit complex enterprise workflows or unique machine learning requirements. Custom development gives you 100% ownership and infinite scalability.

Running cloud AI is expensive. Apps that offer “free” AI features usually implement strict usage limits (e.g., “5 free AI generations per day”). Once the limit is hit, users are prompted to upgrade to a premium SaaS subscription. This Freemium model ensures the API costs of free users do not exceed the revenue generated by paid users.

The timeline varies wildly based on complexity. A basic AI-wrapper app using public APIs can be built in 2 to 3 months. However, a highly secure, enterprise-grade mobile app featuring custom-trained machine learning models, robust UX design, and cloud infrastructure typically requires a 6 to 9-month development lifecycle.