The digital fitness industry has undergone a massive evolution. Five years ago, launching a successful fitness tracking app simply required utilizing the smartphone’s accelerometer to count steps or integrating basic GPS APIs to track running routes. Today, that baseline functionality is considered obsolete.

Modern consumers expect their smartphones to act as hyper-intelligent personal trainers. They want apps that can watch them through the camera, analyze their biomechanics in real-time, correct their squat form, and dynamically adjust their workout volume based on biometric fatigue data.

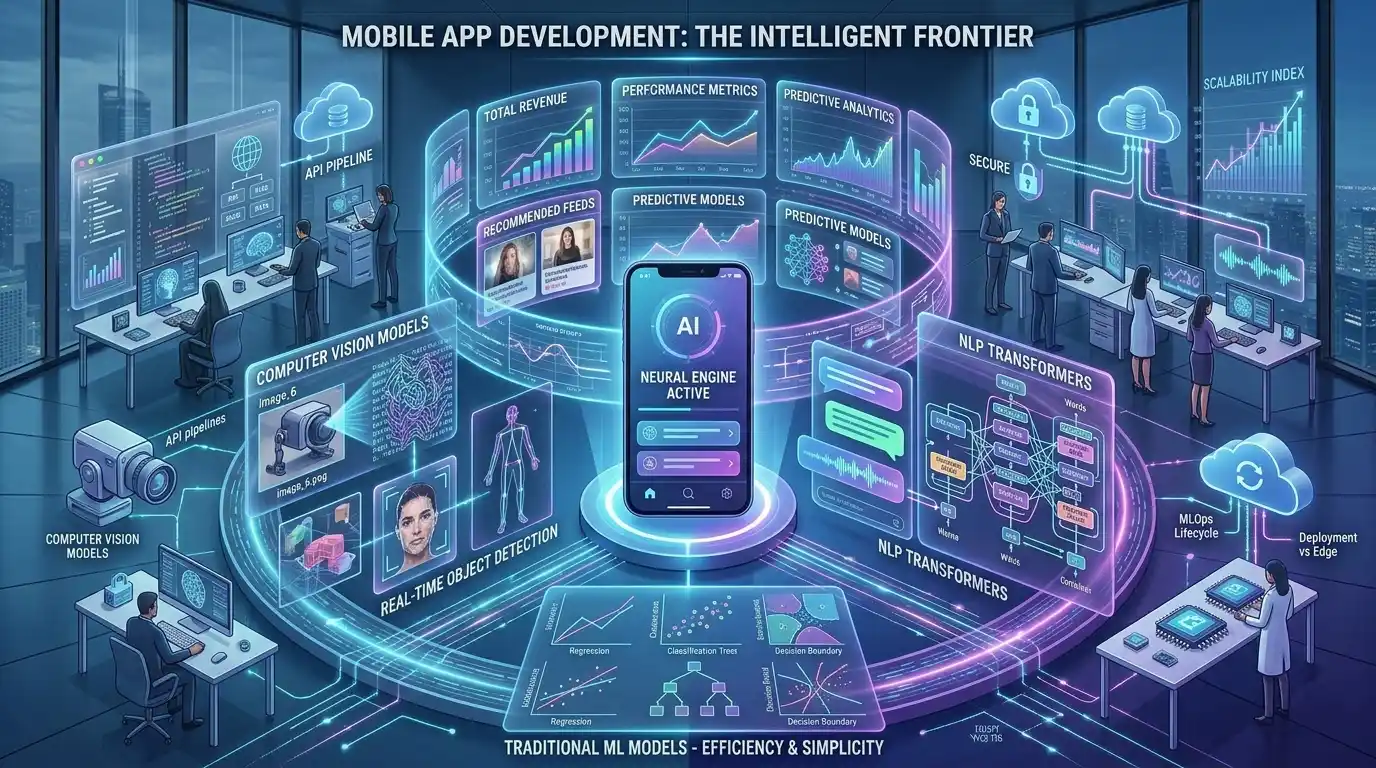

Building this level of intelligence requires a profound shift in software engineering. You are no longer just building user interfaces; you are integrating complex Computer Vision algorithms, deploying neural networks onto mobile devices, and syncing real-time data from Wearable APIs.

Architecting these smart gym apps is incredibly complex, which is why top tech founders seek out specialized AI fitness app development services. In this deep-dive technical guide, we will break down the exact machine learning frameworks and infrastructure required to build a next-generation AI workout app. To understand how this fits into the broader roadmap of your startup, review our master guide on the full lifecycle of AI product development.

If your team is ready to build, MindRind is a premier AI app development service that specializes in integrating these heavy mathematical models seamlessly into iOS and Android ecosystems.

Chapter 1: The Core Tech: Computer Vision and Pose Estimation

If you want your mobile application to correct a user’s workout posture, the app must first be able to “see” and understand the human body. This is achieved through a subfield of Artificial Intelligence known as Computer Vision, specifically a task called Pose Estimation.

How Pose Estimation Works

Pose estimation does not rely on simple object detection (which just identifies that a human is in the frame). Instead, it uses deep learning neural networks to map the human skeletal structure in 2D or 3D space.

Frameworks like Google’s MediaPipe or Apple’s Vision Framework identify specific “landmarks” or “keypoints” on the user’s body. For example, MediaPipe’s pose model tracks 33 distinct 3D landmarks (e.g., left shoulder, right elbow, left hip, right knee); other frameworks expose a different set of keypoints.

The Engineering Workflow for Form Correction

- Video Capture: The mobile app captures a live video feed from the smartphone’s front-facing camera at 30 to 60 Frames Per Second (FPS).

- Landmark Extraction: The neural network processes each frame, mapping the X, Y, and Z coordinates of the 33 skeletal keypoints.

- Heuristic Logic & Angle Calculation: Engineers write vector math logic, running on the device alongside the model, to calculate the angles between these keypoints.

- Example: To evaluate a bicep curl, the app calculates the angle formed by the shoulder, elbow, and wrist keypoints. If the angle does not drop below 30 degrees, the app triggers real-time feedback (via audio or UI overlay) telling the user to “Bend your elbow fully.”
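The angle calculation in the workflow above can be sketched in a few lines. This is a minimal illustration, not a production implementation: the function names, the sample coordinates, and the idea of tracking the smallest elbow angle per rep are assumptions made for the example. Only the 30-degree threshold and the feedback message come from the bicep curl example.

```python
import math

def joint_angle(a, b, c):
    """Return the angle at point b, in degrees, formed by the points a-b-c."""
    # Vectors from the joint (b) to its two neighbouring keypoints.
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(ba, bc))
    norm = math.sqrt(sum(x * x for x in ba)) * math.sqrt(sum(x * x for x in bc))
    if norm == 0:
        raise ValueError("keypoints coincide; angle is undefined")
    # Clamp to guard against floating-point drift outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))

CURL_TOP_ANGLE_DEG = 30.0  # threshold from the bicep curl example

def curl_feedback(min_elbow_angle_deg):
    """Return a cue if the smallest elbow angle in the rep never dropped below the threshold."""
    if min_elbow_angle_deg >= CURL_TOP_ANGLE_DEG:
        return "Bend your elbow fully."
    return None

# Hypothetical (x, y, z) keypoints for one frame: the arm is bent to a right angle.
shoulder = (0.0, 1.0, 0.0)
elbow = (0.0, 0.0, 0.0)
wrist = (1.0, 0.0, 0.0)
elbow_angle = joint_angle(shoulder, elbow, wrist)
cue = curl_feedback(elbow_angle)  # the angle is still above 30 degrees, so a cue is returned
```

In a real app, the same function would run once per frame on the keypoints returned by the pose model, and the per-rep minimum would be reset each time a new repetition starts.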

Chapter 2: The Latency Challenge (Why Edge AI is Mandatory)

The most critical engineering constraint when building a Computer Vision fitness app is latency.

When a user is mid-squat with 200 pounds on their back, they need instant feedback. If the app sends the live video feed over a cellular network to a cloud server for processing, the round-trip network delay could be 2 to 3 seconds. By the time the cloud server sends the “Keep your back straight” warning back to the phone, the user has already finished the rep and potentially injured themselves.
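To put that delay in perspective, the arithmetic below compares it with the 30 to 60 FPS capture rate mentioned in Chapter 1. It is a rough back-of-the-envelope sketch; the function names are made up for illustration, and the 2 to 3 second figure is simply the estimate quoted above.

```python
def frame_budget_ms(fps):
    """Time available to process one frame, in milliseconds."""
    return 1000.0 / fps

def frames_elapsed(delay_s, fps):
    """Number of whole frames captured while waiting delay_s seconds."""
    return int(delay_s * fps)

budget_at_30 = frame_budget_ms(30)       # about 33.3 ms per frame
budget_at_60 = frame_budget_ms(60)       # about 16.7 ms per frame
stale_low = frames_elapsed(2.0, 30)      # frames that pass during a 2 second round trip
stale_high = frames_elapsed(3.0, 30)     # frames that pass during a 3 second round trip
```

At 30 FPS, a 2 to 3 second round trip means the feedback refers to a frame that is 60 to 90 frames old, while the per-frame processing budget is only about 33 milliseconds.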

Processing at 30 Frames Per Second

To achieve true real-time feedback, the pose estimation model must run at a minimum of 30 FPS directly on the user’s smartphone. This means you must eliminate cloud dependency entirely.

To do this, data scientists must compress the neural network models and deploy them locally using Edge AI frameworks (like Core ML for iOS or TensorFlow Lite for Android). This allows the model to utilize the smartphone’s native Neural Processing Unit (NPU). If you do not optimize on-device inference latency for the camera pipeline, the app will lag, drop frames, drain the battery, and overheat the device, leading to immediate app uninstalls.
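The text does not say which compression technique to use. One common option is quantization, which stores weights as 8-bit integers instead of 32-bit floats and shrinks them roughly fourfold. The sketch below shows the idea on a plain list of numbers, assuming a simple symmetric scheme with one shared scale. In practice you would rely on the quantization support in the Core ML or TensorFlow Lite tooling rather than hand-written code like this.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]

# Hypothetical weights for illustration only.
weights = [0.5, -1.27, 0.03, 1.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
# Each restored weight differs from the original by at most half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

The trade-off is a small loss of precision per weight in exchange for a smaller model and cheaper arithmetic, which is why accuracy should be re-checked after compression.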

Chapter 3: Generating Smart Workouts (Recommendation Engines)

While Computer Vision acts as the “eyes” of your AI workout app, the backend logic acts as the “brain.”

A standard fitness app provides static, pre-written 12-week workout PDF plans. An AI-powered fitness app uses predictive analytics to generate dynamic workouts that change daily based on the user’s performance, fatigue levels, and past recovery metrics.

Building the ML Recommendation Engine

To achieve this, developers must build Collaborative Filtering or Reinforcement Learning models. These models ingest historical data (how many reps the user completed yesterday, how much weight they lifted, how much sleep they got) and output the optimal routine for today.

Designing these systems requires a deep understanding of data science. Your engineering team must carefully select the right ML algorithms for recommendations. If the algorithm is too aggressive, the app will recommend weights that are too heavy, risking user injury. If it is too conservative, the user will not see progress and will abandon the app.
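The aggressive-versus-conservative trade-off can be illustrated with a much simpler stand-in than Collaborative Filtering or Reinforcement Learning: a rule-based progression with a hard cap on how far the load can move per session. Everything here is hypothetical, including the 5% cap, the 80% completion cutoff, the 6-hour sleep cutoff, and the field names. A learned model would replace the rules, but a safety cap like this could still wrap its output.

```python
from dataclasses import dataclass

@dataclass
class SessionLog:
    weight_kg: float
    target_reps: int
    completed_reps: int
    sleep_hours: float

MAX_STEP = 0.05          # hypothetical cap: change the load by at most 5% per session
MIN_COMPLETION = 0.8     # hypothetical cutoff: below this, back off
MIN_SLEEP_HOURS = 6.0    # hypothetical recovery cutoff

def next_weight(log: SessionLog) -> float:
    """Suggest the next session's weight from yesterday's log."""
    if log.target_reps <= 0:
        raise ValueError("target_reps must be positive")
    completion = log.completed_reps / log.target_reps
    if completion < MIN_COMPLETION:
        change = -MAX_STEP       # too heavy: reduce the load
    elif completion >= 1.0 and log.sleep_hours >= MIN_SLEEP_HOURS:
        change = MAX_STEP        # all reps done and well rested: progress
    else:
        change = 0.0             # otherwise hold steady
    return round(log.weight_kg * (1.0 + change), 1)

progressed = next_weight(SessionLog(100.0, 10, 10, 8.0))
held = next_weight(SessionLog(100.0, 10, 10, 5.0))
reduced = next_weight(SessionLog(100.0, 10, 6, 8.0))
```

Raising `MAX_STEP` makes the rule more aggressive and lowering it makes it more conservative, which is the same dial a learned recommendation model has to tune.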

Chapter 4: Integrating Wearable Tech (HealthKit and Google Fit APIs)

A truly intelligent AI fitness app does not just rely on what it sees through the camera; it relies on invisible biometric data. To provide hyper-personalized workout recommendations, the app must continuously monitor the user’s Heart Rate Variability (HRV), resting heart rate, sleep cycles, and blood oxygen levels (SpO2).

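This data is collected by wearables like the Apple Watch, Garmin, Oura Ring, or WHOOP straps. To access this data, your engineering team must integrate platform-specific health APIs: HealthKit on iOS and Google Fit on Android. Note that Google has deprecated the Google Fit APIs in favor of Health Connect, so new Android integrations should plan around Health Connect.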

The Apple HealthKit and Google Fit Integrations

Connecting to these APIs is not as simple as making a standard REST API call. Health data is heavily protected by mobile operating systems due to severe privacy regulations.

- Permissions and Encryption: Your app must request granular, explicit permission for every single data type (e.g., you must ask separately for “Sleep Data” and “Heart Rate Data”). Furthermore, HealthKit data is stored on the device and encrypted while the device is locked.

- Background Syncing: Biometric data must be synced asynchronously in the background. If your AI model needs the user’s sleep data from last night to generate today’s workout, the backend must process that data before the user even opens the app in the morning.

By combining the camera’s pose estimation data with real-time wearable biometrics, your AI app can calculate “Muscle Strain” and “Cardio Fatigue,” ensuring the user trains at the absolute optimal threshold without overtraining.

Chapter 5: Data Privacy and Compliance in Fitness Apps

When you build an app that accesses a user’s camera to record them squatting in their living room, while simultaneously reading their heart rate from their smartwatch, you are handling highly sensitive personal data.

If this data is mishandled, your startup could face serious legal repercussions under frameworks like the California Consumer Privacy Act (CCPA) or Europe’s General Data Protection Regulation (GDPR).

Privacy-First AI Architecture

To mitigate legal risks, elite engineering teams design “Privacy-First” architectures.

- Because the pose estimation models run via Edge AI (directly on the device), the video feed never needs to be transmitted to the cloud. The smartphone processes the video frames, calculates the skeletal angles, and immediately deletes the video data.

- The only data sent to your backend server is the metadata (e.g., “The user completed 15 reps of squats with 90% form accuracy”). This ensures that even if your cloud server is compromised by hackers, no video footage of the users can be leaked.
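The metadata-only upload described above might look like the sketch below. The payload field names and the `summarize_set` helper are assumptions made for illustration. The point is that the function only ever receives per-rep results, so no frame or keypoint data can end up in the request body.

```python
import json

def summarize_set(exercise, rep_form_ok):
    """Build the upload payload for one set.

    rep_form_ok holds one boolean per completed rep: True if the form
    check passed for that rep. No video frames or keypoints are passed in.
    """
    reps = len(rep_form_ok)
    accuracy = round(100 * sum(rep_form_ok) / reps) if reps else 0
    return {"exercise": exercise, "reps": reps, "form_accuracy_pct": accuracy}

# Hypothetical set: 10 squats, 9 of them with acceptable form.
summary = summarize_set("squat", [True] * 9 + [False])
payload = json.dumps(summary)  # this string is all that would leave the device
```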

Chapter 6: The Economics of Fitness App Development

Building a Computer Vision application that integrates with wearable tech is an expensive undertaking. The Capital Expenditure (CapEx) required to hire MLOps engineers, 3D computer vision specialists, and native iOS/Android developers is substantial.

To ensure the product is financially viable, tech founders must have a rigorous business model before writing a single line of code. Generative AI and advanced ML features are expensive to maintain. If you offer a completely free app, the ongoing cloud inference costs for the recommendation engines will destroy your margins.

You must design a sustainable subscription strategy, utilizing paywalls for advanced biometric analytics or AI coaching. To understand the exact unit economics of the SaaS fitness model, founders must study how to monetize AI application development solutions effectively.

Architect Your Next-Gen Fitness App with MindRind

The transition from a basic workout log to an intelligent, camera-driven AI personal trainer requires a profound level of mathematical and software engineering. It is not a project that a standard web development agency can handle.

At MindRind, our core expertise lies in AI app development solutions that push the boundaries of what smartphones can do. We are experts in Computer Vision, MediaPipe, Core ML integration, and HealthKit data syncing. We partner with fitness founders to architect applications that track form with minimal latency, protect user privacy, and scale to millions of users globally.

Stop building basic apps. Contact MindRind today to integrate real-time Computer Vision and Wearable AI into your fitness platform.

Frequently Asked Questions

Pose estimation is a Computer Vision technology that uses deep learning to identify and track specific points on a human body (like joints and limbs) in a video feed. In fitness apps, it tracks these skeletal “landmarks” to analyze a user’s form, count repetitions, and correct posture in real-time.

Yes, but only if the app is built using Edge AI. By compressing the Computer Vision model and running it directly on the smartphone’s local processor (using Apple Core ML or Google ML Kit), the app can track your workout form with minimal latency, entirely offline.

Lag or “dropped frames” occur when the machine learning model is not properly optimized for the mobile device. If the developer uses a model that is too heavy, the smartphone’s processor cannot calculate the skeletal angles fast enough (it must hit at least 30 Frames Per Second for smooth tracking), causing the app to freeze.

Developers use the Apple HealthKit API (or Google Fit API for Android) to connect the mobile app to wearable devices. The app requests explicit permission from the user to read biometric data, such as resting heart rate, sleep cycles, and active calories, which the AI then uses to personalize workout recommendations.

They can be, if built poorly. A secure, privacy-first AI fitness app processes the video feed locally on the phone (Edge AI). It calculates the workout data and instantly deletes the video frames. The video is never uploaded to a cloud server, ensuring the user’s privacy is fully protected.

Instead of giving every user the same 12-week static workout plan, a Recommendation Engine is a Machine Learning algorithm that dynamically generates daily workouts. It adjusts the weight, sets, and reps based on the user’s past performance, fatigue levels, and biometric recovery data.

Yes, building a custom Computer Vision app requires specialized Machine Learning Engineers, native mobile developers, and rigorous QA testing. The development cost is significantly higher than a standard database-driven app, but the Return on Investment (ROI) can be substantial if premium fitness SaaS subscriptions retain users well.

To build the Computer Vision layer, developers typically use Google’s MediaPipe, Apple’s Vision Framework, or YOLO (You Only Look Once) algorithms. For the mobile frontend, they use Swift (iOS), Kotlin (Android), or cross-platform frameworks like Flutter or React Native, heavily integrated with native C++ bindings for performance.