For Chief Technology Officers (CTOs) and enterprise recruitment teams, the mandate to integrate Artificial Intelligence into the company’s core operations is clear. However, executing that mandate reveals a severe bottleneck: the global talent shortage.

There is a common misconception in the tech industry that a senior full-stack web developer (proficient in React, Node.js, and SQL) can transition into building enterprise-grade AI systems over a weekend. A backend developer can certainly learn to make a basic REST API call to OpenAI, but architecting a secure, scalable AI backend that keeps hallucinations under control requires a very different skill set.

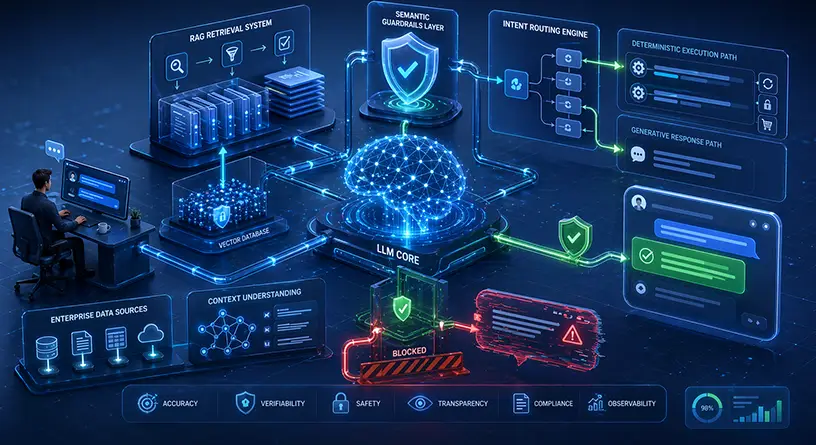

Generative AI systems are probabilistic: they are built on deep neural networks, linear algebra, and calculus, whereas traditional software runs on deterministic, rule-based logic. To build production-ready systems, you must hire a specialized generative AI developer.

In this technical hiring guide, we break down the technology stack, mathematical foundations, and MLOps proficiencies you must test for during the interview process. If you are building out your broader tech roadmap, understanding this required generative AI software development expertise is the first critical step.

For enterprises that cannot wait 6 months to recruit this scarce talent, partnering directly with a premium generative AI development company like MindRind provides immediate access to fully formed, elite engineering squads.

Chapter 1: The Foundation (Mathematics and Deep Learning Frameworks)

You cannot build a scalable AI system if the developer does not understand what is happening inside the “black box.” A true generative AI engineer must possess a strong foundational understanding of Linear Algebra, Calculus, and Probability Theory.

When interviewing candidates, bypass standard algorithms and data structures (like reversing a linked list) and test their proficiency in the core Deep Learning frameworks.

Python and PyTorch / TensorFlow

Python is the undisputed language of Artificial Intelligence. However, knowing Python syntax is not enough. The developer must be an expert in deep learning libraries, specifically PyTorch (developed by Meta) or TensorFlow (developed by Google).

- What to look for: Ask the candidate how they manage tensors (multi-dimensional arrays) and how they handle CUDA out-of-memory (OOM) errors when moving large datasets across NVIDIA GPUs. If they do not understand GPU memory allocation, they cannot deploy models in production.
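One answer a strong candidate might give to the OOM question is "catch the error and retry with a smaller batch." The sketch below illustrates that pattern in plain Python. The `OutOfMemory` exception, `fake_gpu_step`, and the 4-item device limit are hypothetical stand-ins so the example runs without a GPU; in recent PyTorch versions the real error is surfaced as `torch.cuda.OutOfMemoryError`.

```python
from typing import Callable, List, Sequence


class OutOfMemory(RuntimeError):
    """Hypothetical stand-in for a GPU out-of-memory error."""


def process_with_backoff(
    items: Sequence[int],
    run_batch: Callable[[Sequence[int]], List[int]],
    batch_size: int,
) -> List[int]:
    """Process items in batches, halving the batch size after each OOM."""
    results: List[int] = []
    start = 0
    while start < len(items):
        batch = items[start:start + batch_size]
        try:
            results.extend(run_batch(batch))
            start += len(batch)
        except OutOfMemory:
            if batch_size == 1:
                raise  # even a single item does not fit on the device
            batch_size = max(1, batch_size // 2)
    return results


def fake_gpu_step(batch: Sequence[int]) -> List[int]:
    """Simulated device that can hold at most 4 items at once (assumption)."""
    if len(batch) > 4:
        raise OutOfMemory("batch too large for simulated device")
    return [x * 2 for x in batch]


doubled = process_with_backoff(list(range(10)), fake_gpu_step, batch_size=16)
```

Here the batch size falls from 16 to 8 to 4 before the work succeeds. Backoff is only one mitigation; a candidate may equally discuss mixed precision, gradient accumulation, or streaming data to the GPU in smaller pieces.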

Transformer Architectures

Generative AI is powered by the Transformer architecture (introduced in the famous “Attention Is All You Need” paper). A qualified developer must understand how “Self-Attention” mechanisms work, how models assign weights to different tokens, and the mathematical limitations of a model’s Context Window.
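To make the self-attention question concrete, here is a minimal sketch of scaled dot-product attention, softmax(QK^T / sqrt(d)) V, in plain Python. As a simplifying assumption, the queries, keys, and values are all set to the raw token vectors; a real Transformer first multiplies them by learned projection matrices and runs several attention heads in parallel.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one row of scores."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(q: Matrix, k: Matrix, v: Matrix) -> Tuple[Matrix, Matrix]:
    """Return (attention weights, attended outputs) for one attention head."""
    d = len(q[0])
    weights: Matrix = []
    for query in q:
        # One score per (query token, key token) pair, scaled by sqrt(d).
        scores = [
            sum(a * b for a, b in zip(query, key)) / math.sqrt(d) for key in k
        ]
        weights.append(softmax(scores))
    # Each output row is a weighted average of the value vectors.
    outputs = [
        [sum(w * value[c] for w, value in zip(row, v)) for c in range(len(v[0]))]
        for row in weights
    ]
    return weights, outputs


# Three toy 2-dimensional token vectors (illustrative values only).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights, outputs = self_attention(tokens, tokens, tokens)
```

The weight matrix has one row and one column per token, so its size grows with the square of the sequence length. That quadratic cost is one reason a model's context window is limited.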

Chapter 2: Architecting Data (RAG and Vector Databases)

Out-of-the-box foundation models know nothing about your specific B2B enterprise. To make an AI useful, the developer must know how to securely connect the Large Language Model (LLM) to your proprietary databases. In 2026, the industry standard for this is Retrieval-Augmented Generation (RAG).

Advanced RAG Implementation

Anyone can follow a 10-minute tutorial to build a basic RAG script, but building it for an enterprise with millions of documents requires deep data engineering.

- What to look for: You must test the candidate’s RAG implementation skills. Ask them about “Semantic Chunking Strategies.” If they blindly chunk documents by character count rather than by logical paragraphs or semantic boundaries, the AI will retrieve broken context and hallucinate.
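The difference between the two chunking approaches can be sketched in a few lines. The example below uses blank-line paragraph breaks as a simple proxy for semantic boundaries; the sample document, the 40-character naive size, and the 80-character limit are illustrative assumptions, and production pipelines may use sentence splitters or embedding-based boundary detection instead.

```python
from typing import List


def chunk_by_chars(text: str, size: int) -> List[str]:
    """Naive chunking: cut every `size` characters, even mid-sentence."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def chunk_by_paragraphs(text: str, max_chars: int) -> List[str]:
    """Pack whole paragraphs into chunks of at most `max_chars` characters.

    A single paragraph longer than `max_chars` is kept whole, not split.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: List[str] = []
    current = ""
    for para in paragraphs:
        candidate = f"{current}\n\n{para}" if current else para
        if current and len(candidate) > max_chars:
            chunks.append(current)  # close the chunk at a paragraph boundary
            current = para
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


doc = (
    "Refunds are issued within 30 days of purchase.\n\n"
    "Enterprise contracts renew annually unless cancelled in writing.\n\n"
    "Support is available on weekdays."
)

naive_chunks = chunk_by_chars(doc, 40)
paragraph_chunks = chunk_by_paragraphs(doc, 80)
```

The naive version cuts the refund sentence in half, so a retriever could return a fragment with no usable meaning. The paragraph-aware version returns three chunks, each a complete statement.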

Mastery of Vector Databases

Traditional SQL (PostgreSQL) and NoSQL (MongoDB) databases were not originally designed for similarity search over embeddings, although extensions such as pgvector now add that capability. Generative AI developers must be proficient in working with enterprise vector databases such as Pinecone, Milvus, Weaviate, or Qdrant.

- What to look for: The developer must understand how to generate “Embeddings” (converting text into arrays of numbers) and how to optimize “Cosine Similarity Search” to retrieve data in milliseconds without crashing the server.
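As a reference for the interview, cosine similarity search reduces to the sketch below. The three-dimensional vectors and document names are made-up illustrative values; real embeddings come from an embedding model and typically have hundreds or thousands of dimensions.

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(
    query: List[float], index: Dict[str, List[float]], k: int
) -> List[Tuple[str, float]]:
    """Brute-force search: score every stored vector, return the k best."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Hypothetical toy index of document embeddings.
index = {
    "refund_policy": [0.9, 0.1, 0.0],
    "renewal_terms": [0.1, 0.9, 0.1],
    "support_hours": [0.0, 0.2, 0.9],
}

results = top_k([0.8, 0.2, 0.0], index, k=2)
```

This version scans every vector, which is fine for a demo but not for millions of documents. Vector databases avoid the full scan with approximate nearest-neighbor indexes, and a good candidate should be able to explain that trade-off between speed and recall.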

Chapter 3: Fine-Tuning and Open-Source Operations

If your enterprise operates in a highly regulated industry (like healthcare or finance), you cannot send your proprietary data to public APIs like ChatGPT. You will need to host your own models within a secure Virtual Private Cloud (VPC).

This requires a highly specialized subset of skills known as Model Fine-Tuning.

PEFT, LoRA, and Quantization

Training a model from scratch costs millions of dollars. Instead, modern AI developers use Parameter-Efficient Fine-Tuning (PEFT) techniques to teach an existing open-source model (like Meta’s Llama 3) how to behave for a specific enterprise task.

- What to look for: Test the candidate on their open source model tuning expertise. Ask them to explain LoRA (Low-Rank Adaptation) and QLoRA.

- The MLOps Test: Ask them how they would deploy a 70-billion-parameter model on a server that only has 80GB of VRAM. At 16 bits per weight, the weights alone need roughly 140GB, so the model does not fit. If they do not mention “Quantization” (compressing the model’s weights from 16-bit floating point to 4-bit integers), they do not have production-level open-source experience.
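The arithmetic behind the MLOps test is worth having at hand during the interview. The sketch below counts memory for the weights only, which is a simplifying assumption: the KV cache, activations, and quantization metadata all need additional VRAM.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed for the model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


PARAMS = 70e9   # 70-billion-parameter model
VRAM_GB = 80    # the server from the interview question

fp16_gb = weight_memory_gb(PARAMS, 16)  # 16-bit weights
int4_gb = weight_memory_gb(PARAMS, 4)   # 4-bit quantized weights

fits_fp16 = fp16_gb <= VRAM_GB
fits_int4 = int4_gb <= VRAM_GB
```

At 16 bits the weights come to about 140GB and do not fit; at 4 bits they come to about 35GB, which leaves headroom on an 80GB card for the runtime overhead mentioned above.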

Chapter 4: LLM Orchestration (LangChain and LlamaIndex)

A common mistake made by junior developers is writing raw Python scripts that make direct API calls to an LLM. While this works for a simple chatbot demo, it collapses entirely in a production environment. To build enterprise-grade applications, the developer must be highly proficient in LLM Orchestration frameworks.

Mastery of LangChain

LangChain is one of the most widely used frameworks for building complex AI applications. It acts as the connective tissue between the LLM, your APIs, your memory systems, and your user interface.

- What to look for: A qualified developer should be able to explain how to build “Chains” (linking multiple LLM prompts together where the output of one becomes the input of the next) and “Autonomous Agents.” They must know how to equip an LLM with external tools, such as web search capabilities or SQL database access.
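The chaining idea itself is simple enough to sketch without any framework. The example below is a framework-agnostic illustration and deliberately does not use the LangChain API; `fake_llm`, `make_step`, and `run_chain` are hypothetical names, and `fake_llm` is a stub standing in for a real model call.

```python
from typing import Callable, List

LLM = Callable[[str], str]
Step = Callable[[str], str]


def make_step(llm: LLM, template: str) -> Step:
    """Build one chain step: fill the prompt template, then call the model."""
    def step(text: str) -> str:
        return llm(template.format(input=text))
    return step


def run_chain(steps: List[Step], initial: str) -> str:
    """Feed the output of each step into the next one."""
    value = initial
    for step in steps:
        value = step(value)
    return value


def fake_llm(prompt: str) -> str:
    """Stub model that echoes its prompt, so the data flow is visible."""
    return f"<answer to: {prompt}>"


chain = [
    make_step(fake_llm, "Summarize: {input}"),
    make_step(fake_llm, "Translate to French: {input}"),
]
result = run_chain(chain, "quarterly report")
```

The nested output shows the summary step's answer embedded in the translation step's prompt. What an orchestration framework adds on top of this is the production plumbing: retries, tool calling, memory, streaming, and tracing.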

Data Pipelines with LlamaIndex

While LangChain handles the routing, LlamaIndex is the premier framework for connecting large amounts of private data to LLMs.

- What to look for: Your candidate must understand how to ingest data continuously. If a user updates a PDF in your SaaS platform, the developer must know how to trigger an asynchronous LlamaIndex pipeline that immediately updates the vector database without freezing the main application.
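The "update without freezing the application" requirement usually comes down to a background queue. The sketch below shows that shape with Python's standard `asyncio` library rather than the LlamaIndex API; the `reindex_worker` function, the dictionary used as an index, and the uppercase "embedding" are hypothetical stand-ins for a real embedding call and vector database upsert.

```python
import asyncio
from typing import Dict, Tuple


async def reindex_worker(
    queue: "asyncio.Queue[Tuple[str, str]]", index: Dict[str, str]
) -> None:
    """Background task: re-embed each updated document and upsert it."""
    while True:
        doc_id, text = await queue.get()
        await asyncio.sleep(0)        # placeholder for embedding + upsert I/O
        index[doc_id] = text.upper()  # stand-in for the stored embedding
        queue.task_done()


async def main() -> Dict[str, str]:
    queue: "asyncio.Queue[Tuple[str, str]]" = asyncio.Queue()
    index: Dict[str, str] = {}
    worker = asyncio.create_task(reindex_worker(queue, index))

    # The request handler only enqueues the update and returns immediately.
    queue.put_nowait(("contract.pdf", "v1 text"))
    queue.put_nowait(("contract.pdf", "v2 text"))

    await queue.join()  # done here only so the demo can inspect the result
    worker.cancel()
    await asyncio.gather(worker, return_exceptions=True)
    return index


final_index = asyncio.run(main())
```

Because updates are keyed by document ID, the second upload overwrites the first instead of leaving a stale copy in the index. A candidate should also be able to discuss what happens when the worker fails partway through, for example with retries or a dead-letter queue.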

Chapter 5: Building Autonomous Agentic Workflows

In 2026, the focus has shifted from reactive chatbots to proactive “Autonomous Agents.” An agent is given a high-level goal (e.g., “Review this Pull Request for security vulnerabilities”), and it autonomously uses tools to complete the task.

If you are hiring developers to augment your internal engineering squad, they must understand how to build these agents and integrate them directly into your CI/CD pipelines.

- What to look for: Ask the candidate how they would sandbox an AI agent so it can safely execute code without accessing your production servers. If your enterprise is looking to implement these advanced software tools, your developer must understand containerization (Docker), terminal execution, and Git webhook integrations.
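A reasonable answer to the sandboxing question often starts with a locked-down container. The sketch below only builds the `docker run` command; the image name, mount path, resource limits, and `agent_task.py` script are hypothetical, and a real deployment would tune them and may add further isolation such as gVisor or a dedicated VM.

```python
from typing import List


def sandbox_command(image: str, workdir: str, command: List[str]) -> List[str]:
    """Build a `docker run` invocation for executing untrusted agent code."""
    return [
        "docker", "run", "--rm",
        "--network", "none",      # no network: cannot reach production servers
        "--read-only",            # container root filesystem is read-only
        "--cap-drop", "ALL",      # drop all Linux capabilities
        "--memory", "512m",       # cap memory use
        "--pids-limit", "128",    # cap process count (fork-bomb protection)
        "--volume", f"{workdir}:/workspace:ro",  # mount the repo read-only
        "--workdir", "/workspace",
        image,
        *command,
    ]


argv = sandbox_command(
    image="python:3.12-slim",
    workdir="/tmp/pr-checkout",
    command=["python", "agent_task.py"],
)
# To execute it, pass argv to subprocess.run(argv, timeout=...) on a host
# with Docker installed; the timeout bounds how long the agent may run.
```

Passing the command as a list rather than a shell string also avoids shell injection when parts of it originate from model output.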

Chapter 6: MLOps and Production Security

Building the AI is only 20% of the job. Maintaining it in production (MLOps) is the other 80%. Generative AI systems suffer from “Data Drift” (output quality degrades as real-world inputs and source documents change over time) and are highly vulnerable to “Prompt Injection” attacks.

- Prompt Injection Defense: A hacker can trick an LLM into revealing sensitive system prompts by hiding instructions in a text file. The developer you hire must know how to deploy Semantic Guardrails (like Llama Guard or NeMo Guardrails) to scan inputs and outputs for malicious intent.

- Token Optimization: Ask the candidate how they optimize API costs. If they do not mention “Semantic Caching” (saving the answers to frequently asked questions to bypass the LLM entirely) or dynamic context window compression, they will end up costing your company thousands of dollars in wasted cloud bills.
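Semantic caching can be sketched as "look for a sufficiently similar earlier question before calling the model." In the example below, the bag-of-words `embed` function, the 0.8 similarity threshold, and the stub model are illustrative assumptions; a real system would use an embedding model and a vector store, and would tune the threshold against false hits.

```python
import math
from collections import Counter
from typing import Callable, List, Tuple


def embed(text: str) -> Counter:
    """Toy bag-of-words embedding; a real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SemanticCache:
    """Answer from cache when a new question is close enough to an old one."""

    def __init__(self, llm: Callable[[str], str], threshold: float = 0.8) -> None:
        self.llm = llm
        self.threshold = threshold
        self.entries: List[Tuple[Counter, str]] = []
        self.llm_calls = 0

    def ask(self, question: str) -> str:
        query = embed(question)
        for vector, answer in self.entries:
            if cosine(query, vector) >= self.threshold:
                return answer  # cache hit: no tokens spent
        self.llm_calls += 1
        answer = self.llm(question)
        self.entries.append((query, answer))
        return answer


cache = SemanticCache(llm=lambda q: f"answer({q})")
first = cache.ask("what is your refund policy")
second = cache.ask("what is your refund policy please")  # near-duplicate
third = cache.ask("how do I reset my password")          # unrelated
```

Three questions produce only two model calls, because the near-duplicate is served from the cache. A candidate should also be able to explain the risk: a threshold set too low returns a cached answer to a question that only looks similar.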

Chapter 7: The Economics of Talent (In-House vs. Agency)

After reviewing this technical checklist, many CTOs realize that finding a single developer who possesses deep knowledge of PyTorch, Vector Calculus, LangChain, and Enterprise Security is nearly impossible. These individuals are “unicorn” engineers, and their salaries reflect it.

In 2026, the base salary for a Senior Machine Learning Engineer routinely reaches $200,000 to $250,000 annually. Furthermore, a single engineer cannot build an entire enterprise system alone; you need an architect, a data engineer, and an MLOps specialist. Building an internal team can easily incur over $1.5 million in annual payroll liabilities.

Because of this severe talent bottleneck and massive capital expenditure, the smartest tech leaders are bypassing the recruitment phase entirely. By evaluating the strategic differences between Outsourcing vs Hiring, enterprises discover that partnering with an elite AI agency provides an entire squad of vetted experts on day one, at a fraction of the cost of an internal team.

Bypass the Talent Shortage with MindRind

Recruiting, interviewing, and onboarding elite generative AI developers can stall your company’s product roadmap for 6 to 8 months. In the fast-paced AI arms race, an 8-month delay means surrendering your market share to competitors.

You do not need to fight the talent shortage. MindRind is a premier team of elite machine learning engineers, data scientists, and security architects. We already possess the deep expertise in PyTorch, Vector Databases, LangChain orchestration, and secure VPC deployments required to build enterprise-grade AI.

Stop hunting for unicorns and start building. Contact MindRind today to instantly deploy a world-class generative AI engineering team to your enterprise project.

Frequently Asked Questions

Python is the undisputed primary language for generative AI, as the vast majority of machine learning frameworks (PyTorch, TensorFlow) and orchestration tools (LangChain, LlamaIndex) are written in it. Secondary languages include JavaScript/TypeScript (for frontend integration) and Go or Rust (for highly scalable, low-latency API gateways).

Traditional developers write deterministic, rule-based code (If A, then B) to build websites and databases. AI developers build probabilistic systems. They work with neural networks, multi-dimensional vector mathematics, model training, and prompting, requiring a strong foundation in calculus and linear algebra.

LangChain is an orchestration framework that allows developers to link Large Language Models (LLMs) to external tools (like search engines, APIs, and databases). It is a mandatory skill because raw LLMs cannot interact with the outside world; LangChain provides the “hands and eyes” for the AI agent.

To give an AI access to a company’s private documents without retraining the entire model, developers use Retrieval-Augmented Generation (RAG). RAG requires converting text into numbers (embeddings) and storing them in a Vector Database (like Pinecone). The developer must know how to manage and query these databases efficiently.

PEFT is an advanced machine learning technique. Instead of requiring massive supercomputers to train an entire LLM from scratch, PEFT (using methods like LoRA) allows a developer to freeze the model and only train a very small, new set of parameters. This drastically lowers the cost and hardware required to customize an AI.
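The savings from LoRA are easy to quantify. Instead of updating a full weight matrix of shape d_out × d_in, LoRA trains two small matrices of shapes d_out × r and r × d_in whose product forms the update. The sketch below counts the trainable parameters for a single layer; the 4096 × 4096 layer size and rank 8 are illustrative assumptions, not figures from a specific model.

```python
def full_update_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when fine-tuning the whole weight matrix."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a LoRA update B @ A, with B (d_out x r), A (r x d_in)."""
    return rank * (d_out + d_in)


D_OUT, D_IN, RANK = 4096, 4096, 8  # illustrative layer size and rank

full = full_update_params(D_OUT, D_IN)
lora = lora_params(D_OUT, D_IN, RANK)
trainable_fraction = lora / full
```

For this layer, LoRA trains 65,536 parameters instead of 16,777,216, which is under 0.4% of the original count. The frozen base weights still have to be held in memory, which is where QLoRA's quantized base model helps.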

MLOps (Machine Learning Operations) is the practice of managing AI models in a live production environment. An AI developer must know MLOps to monitor the model for accuracy degradation (drift), handle continuous data updates, and scale the cloud GPU infrastructure automatically during traffic spikes.

Ask them how they protect the system against “Prompt Injection” attacks and data leaks. A qualified developer should explain how to deploy Dual-Model Semantic Guardrails, enforce Role-Based Access Control (RBAC) in vector databases, and utilize enterprise API endpoints with zero data retention policies.

Building a production-ready enterprise AI system requires multiple disciplines (Data Engineering, MLOps, AI Architecture, Backend integration). A single developer cannot do it all efficiently. Hiring an elite AI agency provides an entire, multi-disciplinary team immediately, saving months of recruitment time and reducing overall payroll costs.