For Chief Technology Officers (CTOs) and Enterprise Architects, the generative AI landscape has shifted dramatically over the past 24 months. In the early days of the AI boom, building an enterprise AI application meant one thing: wrapping a user interface around OpenAI’s API. There was simply no viable alternative to GPT-4’s reasoning capabilities.

Today, that monopoly has been broken. The open-source community, backed by companies such as Meta and Mistral AI, has released powerful, commercially viable Large Language Models (LLMs) that rival, and in some specific use cases beat, the leading proprietary models.

This presents enterprise leaders with a critical, foundational crossroads. Should your organization rely on proprietary API endpoints (like GPT-4 and Claude 3.5), or should you download the weights of an open-source model (like Llama 3), host it on your own hardware, and take complete control of your AI destiny?

This is not just a technical choice; it dictates your cloud computing budget, your data security posture, and your vulnerability to vendor lock-in. In this deep-dive architectural comparison, we will evaluate both approaches. To see how this decision fits into your broader company roadmap, we recommend reading our foundational generative AI software strategy guide.

If your organization is ready to build, partnering with a top-tier generative AI development firm like MindRind helps ensure you choose the right model for your specific enterprise constraints.

Chapter 1: The Proprietary API Route (GPT-4, Claude, Gemini)

Proprietary foundation models are developed by companies like OpenAI, Anthropic, and Google. Their source code, training data, and model weights are strictly hidden. As an enterprise developer, you interact with these models purely through REST APIs.

The Advantages of Proprietary LLMs

For many B2B SaaS companies, proprietary models are the default starting point, and for good reason:

- Massive Parameter Size & Reasoning: Models like GPT-4o are rumored to have over a trillion parameters (using a Mixture of Experts architecture). They are unparalleled in complex, generalized reasoning, advanced mathematics, and multi-lingual translation.

- Speed to Market: You do not need to hire MLOps engineers or provision expensive GPU clusters. You simply generate an API key, write a few lines of Python or Node.js, and your software instantly has world-class AI capabilities.

- Out-of-the-Box Multi-Modality: Proprietary models excel at seamlessly transitioning between text, audio, and vision processing without requiring you to stitch multiple different models together.
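
To make the "few lines of Python" claim concrete, here is a minimal sketch using only the standard library. The URL is OpenAI's Chat Completions endpoint; the default model name, the prompt, and the helper names `build_chat_request` and `send` are assumptions made for illustration, not a recommended production client.

```python
import json
import urllib.request

# OpenAI's Chat Completions endpoint. The default model name below is a
# placeholder chosen for illustration.
OPENAI_CHAT_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(api_key: str, prompt: str, model: str = "gpt-4o") -> urllib.request.Request:
    """Build (but do not send) an HTTPS request for a single-turn chat completion."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENAI_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the reply text (needs network access and a valid key)."""
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Calling `send(build_chat_request(api_key, "Summarize our Q3 report"))` would return the model's reply. Note that no GPU, container, or model file appears anywhere in this code, which is exactly the speed-to-market advantage described above.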

The Risks: Vendor Lock-In and Black Box Architecture

However, relying solely on proprietary APIs at an enterprise scale introduces severe strategic vulnerabilities:

- Vendor Lock-In: Your entire software application’s core functionality becomes dependent on a single third-party provider. If OpenAI changes its pricing model, deprecates a specific model version, or experiences a server outage, your business suffers immediately.

- The Black Box Problem: You have zero visibility into how the model makes decisions. If the model starts hallucinating or its behavior “drifts” after an invisible backend update by the provider, your engineering team cannot inspect the weights or fix the underlying issue.

Chapter 2: The Open-Source Revolution (Llama 3, Mistral, Qwen)

The term “open-source” in AI can be slightly misleading. The training data is rarely public, and most of these releases are more accurately described as “open-weights”: companies like Meta publish the model weights on platforms like Hugging Face. This means you can download the model, run it locally, fine-tune its weights, and integrate it deeply into your infrastructure.

The Advantages of Open-Source LLMs

Deploying open-source models (like Meta’s Llama 3 70B or Mistral’s Mixtral 8x7B) has become the gold standard for mature enterprise AI for several compelling reasons:

- Absolute Data Sovereignty: This is the biggest driving factor for adoption. With open-source models, you can deploy the AI entirely within your own Virtual Private Cloud (VPC) or even on bare-metal, on-premise servers. Your proprietary enterprise data never crosses the public internet. For a deeper understanding of why this is critical for SOC 2 and HIPAA, read our guide on open-source security and preventing data leaks.

- Customization via Fine-Tuning: Proprietary APIs allow for basic fine-tuning, but you are still just tweaking a black box. With an open-source model, you have full access to the weights. You can use advanced techniques like LoRA (Low-Rank Adaptation) to deeply embed your company’s proprietary coding language or brand voice into the model itself. To understand the mechanics of this, explore our breakdown of fine-tuning open source models versus RAG architectures.

- Eliminating Vendor Lock-in: You own the infrastructure. Nobody can suddenly deprecate the model you are using, and you are not at the mercy of unpredictable API rate limits.
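
To illustrate why LoRA makes fine-tuning affordable, the sketch below counts trainable parameters for a single weight matrix. LoRA freezes the original matrix and trains a low-rank update made of two thin matrices instead. The matrix size and rank are illustrative assumptions, and the function name `lora_trainable_params` is hypothetical.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Return (full fine-tuning params, LoRA params) for one d_out x d_in weight matrix.

    LoRA freezes W and learns a low-rank update B @ A, where B is d_out x rank
    and A is rank x d_in, so only rank * (d_out + d_in) values are trained.
    """
    full = d_out * d_in
    lora = rank * (d_out + d_in)
    return full, lora


# Illustrative sizes only: a 4096 x 4096 projection adapted with rank 8.
full, lora = lora_trainable_params(4096, 4096, rank=8)
print(f"full: {full:,}  lora: {lora:,}  reduction: {full / lora:.0f}x")
# prints "full: 16,777,216  lora: 65,536  reduction: 256x"
```

In this example the adapter trains 256 times fewer values than full fine-tuning of the same matrix, which is why LoRA runs can fit on far more modest hardware.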

The Engineering Challenges

Hosting your own open-source model is not free, nor is it simple.

- Hardware Bottlenecks: Running a large open-source model requires specialized GPUs (like NVIDIA A100s or H100s). Securing cloud GPU instances is difficult due to global supply shortages, and renting them is expensive.

- MLOps Complexity: You cannot just “turn on” an LLM. You need a highly specialized team to handle containerization, tensor parallelism (splitting the model across multiple GPUs), load balancing, and quantization (compressing the model to run faster).
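
A rough sizing exercise shows why both GPUs and quantization matter. The sketch below estimates the memory needed for the weights alone. The 80 GB default matches the larger A100 and H100 variants, and the function names are hypothetical. The estimate deliberately ignores the KV cache and activations, so treat the result as a lower bound.

```python
import math


def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def min_gpus(params_billions: float, bits_per_weight: int, gpu_memory_gb: float = 80.0) -> int:
    """Lower bound on GPU count from weights only; real deployments need extra headroom."""
    return math.ceil(weight_memory_gb(params_billions, bits_per_weight) / gpu_memory_gb)


for bits in (16, 4):
    gb = weight_memory_gb(70, bits)
    print(f"70B model at {bits}-bit: {gb:.0f} GB of weights, at least {min_gpus(70, bits)} GPU(s)")
```

For a 70-billion-parameter model this gives 140 GB at 16-bit precision (at least two 80 GB GPUs, hence tensor parallelism) and 35 GB at 4-bit (one GPU), before any runtime overhead.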

Chapter 3: Comparing the Total Cost of Ownership (TCO)

One of the biggest misconceptions in enterprise AI is that “open-source means free.” You do not pay Meta a licensing fee to use Llama 3 (as long as your products have no more than 700 million monthly active users), but the infrastructure required to run it is a major expense.

To make an informed decision, CTOs must evaluate the Total Cost of Ownership (TCO) across both paradigms.

The Cost of Proprietary APIs (OpEx Heavy)

With proprietary models like GPT-4, your costs are strictly Operational Expenditure (OpEx). You pay based on “Tokens” (parts of words) sent to the API and generated by the API.

- The Pro: Zero upfront infrastructure costs. You only pay for exactly what you use.

- The Con: At enterprise scale, token costs scale linearly and aggressively. If you build an internal RAG (Retrieval-Augmented Generation) application where 5,000 employees are searching massive company documents every day, you are sending millions of tokens per hour. Your API bill can easily exceed $50,000 a month, destroying the ROI of the software.
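
The linear scaling is easy to see in a back-of-the-envelope estimate. In the sketch below, every number in the usage example (requests per day, tokens per request, and both per-million-token prices) is a hypothetical placeholder rather than any vendor's actual pricing. Only the 5,000-employee headcount comes from the scenario above.

```python
def monthly_api_cost(
    users: int,
    requests_per_user_per_day: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    input_price_per_million: float,
    output_price_per_million: float,
    working_days: int = 22,
) -> float:
    """Estimate a monthly token bill; cost grows linearly with every input."""
    requests = users * requests_per_user_per_day * working_days
    input_cost = requests * input_tokens_per_request / 1_000_000 * input_price_per_million
    output_cost = requests * output_tokens_per_request / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Hypothetical RAG workload: large retrieved context in, short answer out.
cost = monthly_api_cost(
    users=5_000,
    requests_per_user_per_day=20,
    input_tokens_per_request=8_000,
    output_tokens_per_request=500,
    input_price_per_million=5.0,    # placeholder price in USD
    output_price_per_million=15.0,  # placeholder price in USD
)
print(f"${cost:,.0f} per month")  # prints "$104,500 per month"
```

Under these assumed numbers, most of the bill comes from input tokens, because RAG applications resend large chunks of retrieved documents with every query.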

The Cost of Open-Source Hosting (CapEx Heavy)

With open-source models, you pay for the compute (GPUs) that hosts the model, whether you buy the hardware outright or rent it from a cloud provider as a fixed monthly commitment, regardless of how many tokens you generate.

- The Pro: The cost is flat. Whether your employees send 1,000 prompts or 100,000 prompts a day, the server cost stays the same, provided the traffic fits within the throughput of the GPUs you have provisioned. At a high enough volume, the cost-per-token of an open-source model drops to fractions of a penny, making it vastly cheaper than a metered API such as OpenAI’s.

- The Con: Renting a cluster of NVIDIA H100 GPUs on AWS or Azure costs thousands of dollars a month just to keep the server idling.

Before deciding on your architecture, your engineering team must run a strict break-even analysis. We break down the exact math for this in our guide on calculating your API vs Hosting costs for custom generative AI.
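
As a starting point, a simplified break-even calculation might look like the sketch below. It assumes a single blended API price per million tokens and a flat monthly hosting bill. It also ignores engineering salaries and assumes the hosted cluster can actually serve the volume. The function names and the dollar figures in the usage example are hypothetical.

```python
def break_even_tokens_per_month(hosting_cost_per_month: float, api_price_per_million: float) -> float:
    """Monthly token volume at which a flat hosting bill equals the metered API bill."""
    if api_price_per_million <= 0:
        raise ValueError("api_price_per_million must be positive")
    return hosting_cost_per_month / api_price_per_million * 1_000_000


def cheaper_option(tokens_per_month: float, hosting_cost_per_month: float, api_price_per_million: float) -> str:
    """Compare the two bills for a given monthly volume."""
    api_cost = tokens_per_month / 1_000_000 * api_price_per_million
    return "self-hosted" if hosting_cost_per_month < api_cost else "api"


# Hypothetical inputs: $20,000/month of GPUs versus $10 per million tokens.
threshold = break_even_tokens_per_month(20_000, 10.0)
print(f"break-even at {threshold:,.0f} tokens per month")
print(cheaper_option(500_000_000, 20_000, 10.0))    # below the threshold
print(cheaper_option(5_000_000_000, 20_000, 10.0))  # above the threshold
```

With these placeholder figures, the crossover sits at two billion tokens per month: below it the metered API is cheaper, above it self-hosting wins.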

Chapter 4: The Talent Gap and Implementation Strategy

You cannot deploy an open-source LLM with a team of standard front-end web developers.

Interacting with an API like Anthropic’s Claude requires little more than basic JSON formatting. Deploying an open-source model, by contrast, requires deep knowledge of PyTorch, vLLM (an open-source LLM inference and serving engine), CUDA memory management, and model quantization (for example, converting 16-bit floating-point weights to 4-bit integers so the model can fit on cheaper hardware).
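
To give a feel for what quantization involves, here is a toy sketch of symmetric 4-bit quantization in plain Python. It is a deliberately simplified illustration with one scale for the whole list of weights. Production schemes are more elaborate (for example, separate scales per group of weights and calibration data), and the function names here are hypothetical.

```python
def quantize_int4(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric quantization to signed 4-bit integers in [-7, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-7, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the 4-bit integers."""
    return [q * scale for q in quantized]


weights = [0.7, -0.3, 0.12, 0.0]
q, scale = quantize_int4(weights)
restored = dequantize(q, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"worst rounding error: {worst_error:.3f}")
```

Each weight now needs 4 bits instead of 16, at the price of a small rounding error (at most half the scale per weight). Managing that accuracy trade-off across billions of weights is the specialist skill in question.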

If your enterprise decides to take the open-source route to secure your data, you must understand the specific skills needed for Llama and other open-source deployments before building your internal engineering team.

Chapter 5: The 2026 Enterprise Solution: The Hybrid Multi-Model Strategy

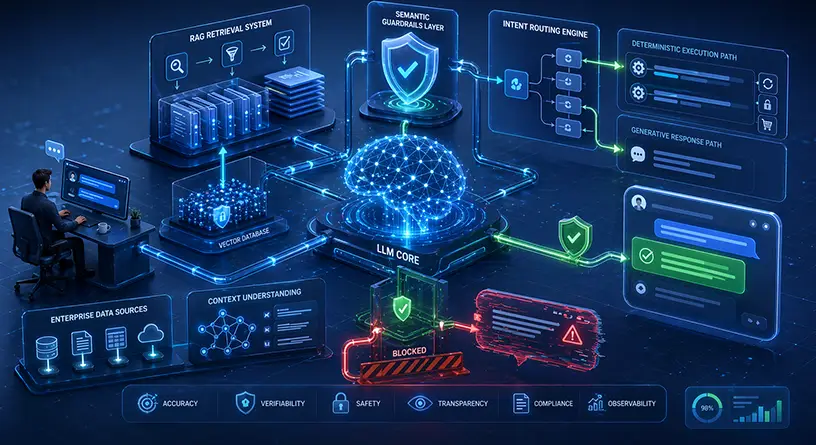

The most sophisticated tech companies in 2026 are not choosing just one path. They are adopting a Hybrid, Multi-Model Routing Strategy.

Instead of routing every single prompt to a massive, expensive model, intelligent API Gateways analyze the complexity of the user’s request:

- Simple/Internal Tasks: If a user asks for a simple summary of a document, or if the backend needs to quickly parse a JSON file, the gateway routes the request to a fast, locally hosted open-source model (like Llama 3 8B) running inside your secure VPC. This costs almost nothing and ensures data privacy.

- Complex Reasoning Tasks: If a user asks a highly complex mathematical question or requires advanced code generation, the gateway dynamically routes that specific prompt to a proprietary API like GPT-4o.

This hybrid approach guarantees maximum data security for sensitive tasks while maintaining access to state-of-the-art reasoning capabilities, all while keeping cloud compute costs at an absolute minimum.
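
The routing decision can be sketched in a few lines. The version below is a minimal illustration that uses a sensitivity flag and a keyword heuristic. The backend labels, the `COMPLEX_HINTS` list, and the `PromptRequest` type are all hypothetical, and a real gateway would more likely use a trained classifier than keyword matching.

```python
from dataclasses import dataclass

LOCAL_MODEL = "local-llama-3-8b"       # hypothetical label for the VPC-hosted model
PROPRIETARY_MODEL = "proprietary-api"  # hypothetical label for the external API

# Naive stand-in for a complexity classifier.
COMPLEX_HINTS = ("prove", "derive", "refactor", "optimize", "algorithm")


@dataclass
class PromptRequest:
    text: str
    contains_sensitive_data: bool = False


def route(request: PromptRequest) -> str:
    """Pick a backend: sensitive prompts stay local, complex ones go to the external API."""
    # Sensitive data stays inside the VPC no matter how complex the task is.
    if request.contains_sensitive_data:
        return LOCAL_MODEL
    lowered = request.text.lower()
    looks_complex = any(hint in lowered for hint in COMPLEX_HINTS)
    return PROPRIETARY_MODEL if looks_complex else LOCAL_MODEL


print(route(PromptRequest("Summarize this meeting memo")))
print(route(PromptRequest("Derive the closed-form solution and prove it")))
print(route(PromptRequest("Optimize this payroll query", contains_sensitive_data=True)))
```

Checking the sensitivity flag first is a deliberate design choice in this sketch: it means a privacy-critical prompt can never be sent outside your network just because it also looks difficult.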

Architect Your AI Future with MindRind

Deciding between open-source models and proprietary APIs is an architectural decision that will impact your company for years. A wrong choice leads to vendor lock-in, data leaks, or unmanageable cloud bills.

At MindRind, our machine learning engineers are experts in both paradigms. As a premium generative ai development firm, we help B2B enterprises analyze their specific data constraints and token volumes. Whether you need a highly optimized LangChain architecture utilizing OpenAI, or a fully air-gapped, fine-tuned Llama 3 model deployed on your own AWS infrastructure, we build it flawlessly.

Take control of your AI strategy. Contact MindRind today to discuss the optimal foundation model architecture for your enterprise.

Frequently Asked Questions

Proprietary LLMs (like OpenAI’s GPT-4) are closed systems accessed via paid APIs; you cannot see or alter the model’s code. Open-source LLMs (like Meta’s Llama 3) allow developers to download the model’s actual neural weights for free, host them on their own private servers, and deeply customize them.

Llama 3 is open-weights and free for both research and commercial use. However, Meta’s licensing agreement stipulates that if your platform has more than 700 million monthly active users, you must request a special commercial license from Meta. For the vast majority of B2B enterprises, it is entirely free to use.

From an enterprise data privacy standpoint, open-source models are vastly more secure. Because you host the open-source model on your own Virtual Private Cloud (VPC), your sensitive data never leaves your internal network. With GPT-4, your data must travel over the internet to OpenAI’s servers.

In general reasoning, GPT-4 is still superior. However, for highly specific enterprise tasks (like writing internal company SQL queries or analyzing specific legal documents), a smaller open-source model that has been heavily “fine-tuned” on your company’s data will often outperform a generalized model like GPT-4, while running much faster.

While the open-source software is free, Large Language Models require massive amounts of VRAM (Video RAM) to operate. To host a large model, you must rent specialized cloud GPUs (like NVIDIA A100s or H100s) from providers like AWS or Azure, which can cost thousands of dollars per month in server fees.

Vendor lock-in occurs when an enterprise builds its entire software product around the specific API structure of one provider (like Anthropic or OpenAI). If that provider raises prices, changes their model’s behavior, or suffers an outage, the enterprise is trapped and its software breaks.

A hybrid strategy uses middleware (an AI Gateway) to intelligently route prompts. Simple, data-sensitive tasks are sent to an internal, open-source model to save money and ensure privacy. Highly complex, logic-heavy tasks are routed to a proprietary model like GPT-4.

Yes. Integrating an API like GPT-4 requires standard full-stack developers. Deploying an open-source model requires specialized Machine Learning Operations (MLOps) engineers who understand tensor parallelism, CUDA memory management, and model quantization (reducing the model size to fit on GPUs).