In the hyper-competitive mobile app market, user patience is short. A commonly cited industry benchmark is that more than half of users abandon a mobile experience that takes longer than three seconds to load.

When you introduce Artificial Intelligence into this environment, the latency problem becomes significantly worse. Running a photo through a deep neural network or generating a conversational text response requires massive computational power. If your mobile app freezes with a spinning loading wheel every time the user interacts with an AI feature, your churn rate will skyrocket, and your app will fail.

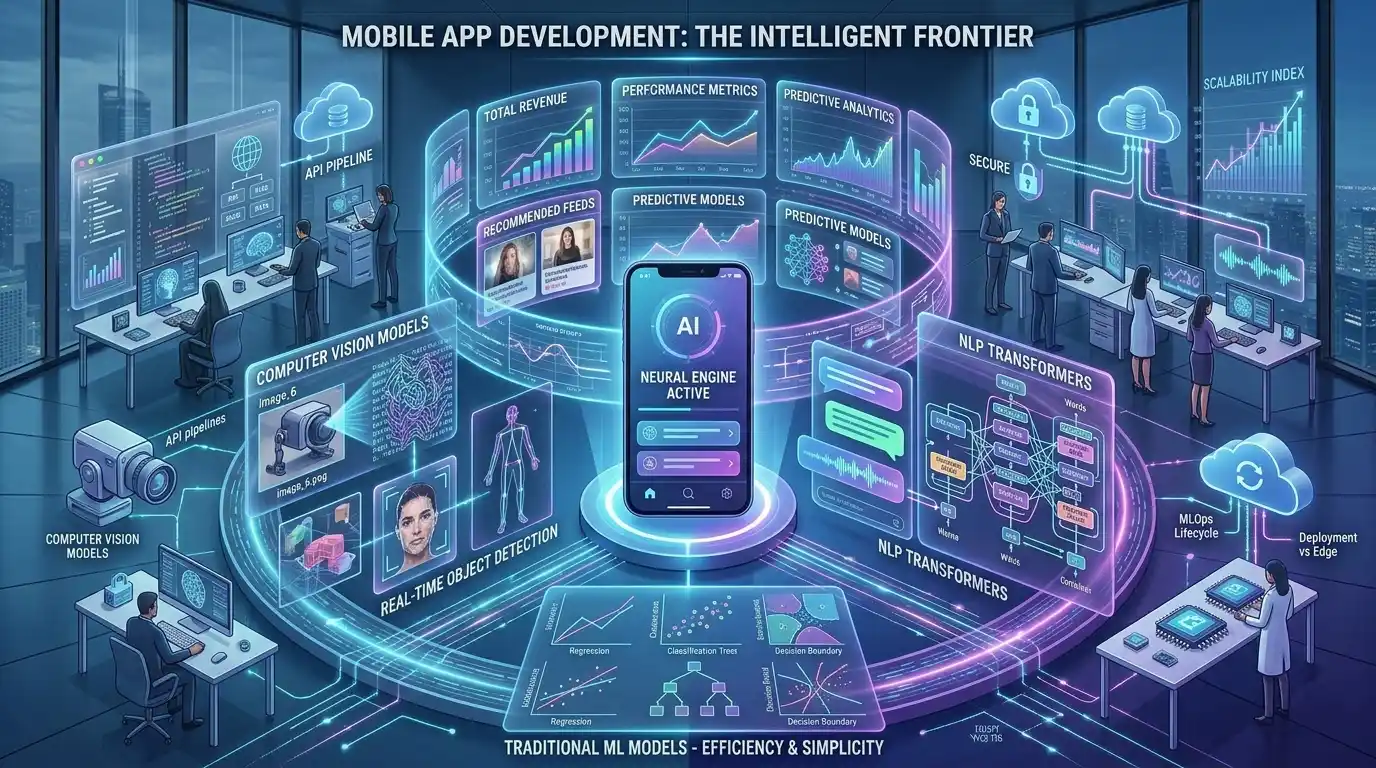

For Chief Technology Officers (CTOs) and mobile engineering teams, solving this latency problem is the most critical hurdle in machine learning app development. To overcome it, you must make a foundational architectural decision: Will your machine learning models process data on remote servers in the cloud (Cloud APIs), or will they process data directly on the user’s smartphone (Edge AI)?

In this deep-dive engineering analysis, we will compare the infrastructure, performance metrics, and hardware constraints of both approaches. Understanding this trade-off is a mandatory step when deploying AI applications to the App Store.

If your startup needs a scalable, low-latency architecture from day one, MindRind provides elite AI application development services to help you architect the perfect balance between cloud power and edge speed.

Chapter 1: The Cloud API Architecture (The Status Quo)

The vast majority of AI-powered mobile and web applications built today rely on Cloud Inference. In this architecture, the “brain” of the AI lives on massive server farms owned by Amazon, Google, or OpenAI.

How Cloud Inference Works

When a user takes an action on their mobile phone (e.g., uploading an image of a plant to identify its species), the app does not analyze the image. Instead, it serializes the image, sends it over the internet via a REST or gRPC API to a backend server (such as AWS SageMaker), and waits. The cloud server’s powerful GPUs process the image through a massive neural network, calculate the probability, and send a JSON response back to the phone.
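The round trip described above can be sketched in a few lines of Python. This is a minimal illustration, not a production client: the endpoint URL, the `image_b64` request field, and the `predictions` response shape are all hypothetical, and a real backend such as a SageMaker endpoint would define its own contract and authentication.

```python
import base64
import json
import urllib.request

# Hypothetical endpoint; replace with your own backend URL.
INFERENCE_URL = "https://api.example.com/v1/classify"


def build_request(image_bytes: bytes, url: str = INFERENCE_URL) -> urllib.request.Request:
    """Serialize the image as base64 inside a JSON body and wrap it in a POST request."""
    payload = {"image_b64": base64.b64encode(image_bytes).decode("ascii")}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_response(body: bytes) -> tuple[str, float]:
    """Return the most probable (label, probability) pair from the JSON response."""
    predictions = json.loads(body)["predictions"]  # assumed response shape
    best = max(predictions, key=lambda p: p["probability"])
    return best["label"], best["probability"]


def classify(image_bytes: bytes, timeout_s: float = 5.0) -> tuple[str, float]:
    """Blocking round trip: the app has no result until the server answers."""
    with urllib.request.urlopen(build_request(image_bytes), timeout=timeout_s) as resp:
        return parse_response(resp.read())
```

Note that `classify` does no inference itself. Everything the user waits for happens between sending the request and reading the response, which is why the network dominates the experience.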

The Engineering Advantages of Cloud AI

- Near-Unlimited Compute Power: Cloud servers can run massive models with billions of parameters (like GPT-4). A mobile phone simply does not have the RAM to hold neural weights of that size.

- Instant Model Updates: If your data scientists improve the algorithm, they simply deploy the new model to the cloud server. Every single mobile app user instantly benefits from the upgrade without needing to download an app update from the iOS App Store.

- Smaller App Bundle Size: Because the ML model lives on the server, the actual mobile app size remains small, resulting in faster download times for the user.

The Fatal Flaw: Network Latency

The Achilles heel of Cloud AI is physics. Data must physically travel from the smartphone, through cellular towers, to a data center, and back. Even on a 5G network, this round trip adds latency that the user can feel, and the delay grows with the size of the payload. If the user is in a subway or a rural area with poor cellular coverage, the AI feature completely breaks. Understanding this limitation is vital when mapping out your overall AI app architecture.
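A rough latency budget makes the problem concrete. The sketch below adds upload time, network round-trip time, and server inference time; the response is assumed to be a small JSON body whose download time is negligible. All figures in the example calls are illustrative assumptions, not measurements.

```python
def transfer_ms(size_kb: float, link_mbps: float) -> float:
    """Time to push size_kb kilobytes through a link of link_mbps megabits per second."""
    return size_kb * 8.0 / link_mbps


def cloud_round_trip_ms(payload_kb: float, uplink_mbps: float,
                        rtt_ms: float, inference_ms: float) -> float:
    """Upload time + network round-trip time + server-side inference time."""
    return transfer_ms(payload_kb, uplink_mbps) + rtt_ms + inference_ms


# Assumed scenario: 500 KB photo, 40 ms of GPU inference on the server.
good_signal = cloud_round_trip_ms(500, uplink_mbps=5.0, rtt_ms=60, inference_ms=40)
weak_signal = cloud_round_trip_ms(500, uplink_mbps=0.5, rtt_ms=300, inference_ms=40)
print(f"good signal: {good_signal:.0f} ms, weak signal: {weak_signal:.0f} ms")
```

Under these assumptions the server's GPU accounts for only 40 ms of the total; the rest is spent moving bytes, which is why faster servers alone do not fix the user experience.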

Chapter 2: The Rise of Edge AI (On-Device Inference)

To eliminate network latency and create a truly seamless user experience, elite mobile engineering teams are shifting toward Edge Computing. “The Edge” simply means processing the data at the absolute edge of the network—right on the user’s local device.

The Hardware Revolution: NPUs and Neural Engines

Five years ago, running a complex machine learning model on a smartphone would have melted the device’s battery in minutes. Today, modern smartphone chips (like Apple’s A-Series Bionic chips or Qualcomm’s Snapdragon series) feature dedicated Neural Processing Units (NPUs) or “Neural Engines.”

These chips are specifically optimized to perform matrix multiplications (the exact calculations required by neural networks) at blistering speeds, without draining the battery.

Mobile ML Frameworks (CoreML and MLKit)

To deploy Edge AI, data scientists must compress their machine learning models (most commonly through a process called Quantization) and convert them into mobile-friendly formats.

- For iOS: Engineers use Apple’s CoreML framework to integrate these models directly into Swift code.

- For Android / Cross-Platform: Engineers utilize Google’s TensorFlow Lite or MLKit, which provide pre-optimized APIs for tasks like object detection and text recognition.

Chapter 3: When to Use Edge AI (Zero-Latency Use Cases)

Edge AI is not just a performance optimization; for certain industries, it is the only viable architecture.

Real-Time Computer Vision (Fitness & Health)

If an app needs to analyze a live video feed at 30 frames per second, Cloud AI is impractical. You cannot reliably send 30 high-resolution images to an AWS server every single second over a cellular network and receive each result before the next frame arrives. For example, when building an AI fitness app that uses the smartphone camera to track a user’s skeletal pose and correct their squat form in real-time, the pose-estimation model must run on-device using Edge AI.
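The arithmetic behind that claim is simple to check. The sketch below computes the sustained uplink bandwidth needed to stream frames to a server and the time budget available per frame. The 200 KB frame size is an assumed figure for a compressed high-resolution frame, not a measured one.

```python
def required_uplink_mbps(frame_kb: float, fps: int) -> float:
    """Sustained uplink bandwidth (megabits/s) needed to upload every frame."""
    return frame_kb * 8.0 * fps / 1000.0


def frame_budget_ms(fps: int) -> float:
    """Time available to process one frame before the next one arrives."""
    return 1000.0 / fps


# Assumed: each compressed frame is about 200 KB.
print(required_uplink_mbps(frame_kb=200, fps=30))  # 48.0 megabits per second
print(round(frame_budget_ms(30), 1))               # 33.3 ms per frame
```

With roughly 33 ms available per frame, a single network round trip can consume the whole budget before the server has even started inference.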

The Offline Mode Requirement

Consider an agricultural app designed to help farmers detect crop diseases by scanning plant leaves. Farms are notoriously disconnected from fast cellular networks. If the app relies on Cloud APIs, it is useless in the field. Edge AI allows the model to run 100% offline, providing critical diagnostic capabilities regardless of internet connectivity.

Chapter 4: The Ultimate Advantage of Edge AI: Data Privacy

Beyond zero latency and offline capabilities, Edge AI solves the most difficult regulatory hurdle in modern software development: Data Privacy.

When data is sent to a Cloud API, it leaves the user’s device. It travels over networks, hits a load balancer, and is temporarily stored in server RAM during inference. In highly regulated industries, this creates a massive security liability.

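If you are developing a medical mobile app that analyzes pictures of a user’s skin to detect melanoma, sending those sensitive images to a public cloud server can put you in breach of regulations such as HIPAA (in the US) or GDPR (in Europe) unless the right safeguards are in place. Under HIPAA, that means a signed BAA (Business Associate Agreement) with the cloud provider; under GDPR, it means a lawful basis for processing health data and a data processing agreement with the provider. These safeguards add cost and legal overhead.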

By utilizing Edge AI, the image never leaves the user’s smartphone. The model processes the data locally, generates the medical prediction, and deletes the image from temporary memory. This “Zero Data Transmission” architecture is the gold standard for AI healthcare mobile apps and secure diagnostics, drastically reducing the enterprise’s legal liability.

Chapter 5: The Limitations of Edge Computing

If Edge AI is so fast and secure, why isn’t every app using it? Because running neural networks on smartphones introduces severe hardware constraints.

The App Bundle Size Explosion

A powerful machine learning model can easily be 500 MB to 2 GB in size. If you package that model directly into your iOS or Android app bundle, many users will refuse to download it. Large downloads also push users to wait for Wi-Fi, which adds friction to your app’s user acquisition funnel.

Engineering Solution: Developers must use Model Quantization to compress the weights from 32-bit floats to 8-bit integers, shrinking the weights to roughly a quarter of their original size while trying to preserve accuracy. Alternatively, the app can download the model asynchronously in the background after the initial App Store installation.
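To show what the float-to-integer conversion means in practice, here is a minimal sketch of symmetric per-tensor quantization using only the Python standard library. It is a teaching example under simplifying assumptions (one scale for the whole tensor, no calibration data); production toolchains such as the CoreML or TensorFlow Lite converters use more sophisticated schemes.

```python
from array import array


def quantize_int8(weights):
    """Map float weights to signed 8-bit integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = array("b", (max(-127, min(127, round(w / scale))) for w in weights))
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]


weights = array("f", [0.5, -1.27, 0.003, 1.0])  # 32-bit floats, toy values
q, scale = quantize_int8(weights)

print(len(weights) * weights.itemsize, "bytes as float32")
print(len(q) * q.itemsize, "bytes as int8")
print(list(q))
```

The storage drops from 4 bytes to 1 byte per weight. The cost is a rounding error of at most half the scale per weight, which is the accuracy trade-off the paragraph above refers to.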

Device Fragmentation

While a flagship such as the iPhone 15 Pro Max can run small, quantized language models on-device, a budget Android device from 2019 will struggle, overheat, or crash on the same workload. If your startup relies entirely on Edge AI, you are effectively cutting off millions of potential users who cannot afford flagship smartphones.
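One way to soften fragmentation is to check device capability at runtime and fall back to the cloud on weaker hardware. The sketch below is illustrative only: `DeviceProfile`, its fields, and the "model may use at most half of RAM" threshold are hypothetical choices, not values from any platform API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeviceProfile:
    """Hypothetical summary of what the app has detected about the device."""
    ram_gb: float
    has_npu: bool


def choose_execution_path(device: DeviceProfile, model_ram_gb: float) -> str:
    """Return "edge" if the device can plausibly host the model, otherwise "cloud"."""
    # Assumed rule of thumb: leave at least half of RAM for the OS and the app itself.
    fits_in_memory = model_ram_gb <= device.ram_gb * 0.5
    return "edge" if fits_in_memory and device.has_npu else "cloud"


flagship = DeviceProfile(ram_gb=8, has_npu=True)
budget_2019 = DeviceProfile(ram_gb=3, has_npu=False)

print(choose_execution_path(flagship, model_ram_gb=2.0))     # edge
print(choose_execution_path(budget_2019, model_ram_gb=2.0))  # cloud
```

In a real app the thresholds would come from profiling on representative devices rather than from a fixed rule.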

Chapter 6: The 2026 Standard: Hybrid AI Architecture

The most successful B2B and B2C mobile applications in 2026 do not choose just one path. They utilize a Hybrid AI Architecture that dynamically routes tasks based on computational complexity and network availability.

How Hybrid AI Routing Works

Imagine a smart journaling app that uses AI to analyze a user’s mood.

- The Edge Task: When the user is typing, the app uses a tiny, local Edge AI model (via CoreML) to perform real-time sentiment analysis, highlighting negative words instantly with zero latency.

- The Cloud Task: When the user finishes the entry, they tap “Generate Weekly Psychiatric Summary.” This requires massive reasoning capabilities. The app detects that the user is on a strong Wi-Fi connection and sends the text payload to a massive, secure LLM hosted on an AWS Cloud API to generate the deep analysis.

This hybrid approach guarantees that the core app experience is always blazing fast, while still giving the user access to state-of-the-art computational power when heavy lifting is required.
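The routing rule in the journaling example can be sketched as a small decision function. The `Route` names and the "defer until online" branch are assumptions added for illustration; the example above only describes the edge and cloud paths.

```python
from enum import Enum


class Route(Enum):
    EDGE = "edge"    # run the small on-device model now
    CLOUD = "cloud"  # send the payload to the hosted model
    DEFER = "defer"  # assumed behavior: queue the request until a connection returns


def route_task(needs_large_model: bool, online: bool) -> Route:
    """Pick where a task runs based on its complexity and network availability."""
    if not needs_large_model:
        return Route.EDGE  # real-time tasks never wait on the network
    return Route.CLOUD if online else Route.DEFER


# Live sentiment highlighting while typing, even with no signal:
print(route_task(needs_large_model=False, online=False))  # Route.EDGE
# Weekly summary on Wi-Fi:
print(route_task(needs_large_model=True, online=True))    # Route.CLOUD
```

Keeping the decision in one function makes it easy to add further inputs later, such as battery level or the device capability check, without touching the call sites.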

Architect Your Mobile AI Infrastructure with MindRind

Deciding where your machine learning models should live is not just an engineering decision; it dictates your app’s user retention, data security compliance, and ongoing cloud server costs.

You do not have to navigate these complex architectural trade-offs alone. At MindRind, our elite mobile engineers and data scientists specialize in machine learning app development. We are experts in both massive Cloud Inference deployments and hyper-optimized Edge AI integrations.

Whether you need a HIPAA-compliant AWS architecture or a blazing-fast CoreML model deployed directly to the App Store, we build the infrastructure that makes your app unstoppable.

Don’t let latency kill your mobile app. Contact MindRind today to architect a zero-latency machine learning application.

Frequently Asked Questions

What is the difference between Cloud AI and Edge AI?

Cloud AI processes machine learning models on remote data centers (servers) accessed via the internet, offering massive compute power but introducing network latency. Edge AI processes the models locally, directly on the user’s mobile device (smartphone or tablet), which removes network latency and enables offline use.

Why is my AI-powered mobile app slow?

If your app uses Cloud APIs, the latency is caused by network transmission. The app must package the data, send it over a cellular network to a cloud server, wait for the server’s GPU to process the neural network, and wait for the response to travel back to the phone.

What are CoreML and TensorFlow Lite?

CoreML is Apple’s native machine learning framework that allows developers to run compressed AI models directly on iOS devices. TensorFlow Lite is Google’s equivalent framework, heavily used in Android development. Both frameworks are essential for building Edge AI applications.

Can an AI mobile app work offline?

Yes, but only if it is built using an Edge AI architecture. By packaging the machine learning model directly into the app bundle (or downloading it locally post-install), the app can process data, images, and text entirely offline.

How does Edge AI improve data privacy?

Edge AI is the strongest privacy architecture because it operates on “Zero Data Transmission.” The user’s sensitive data (like medical photos or private texts) never leaves their smartphone. It is analyzed locally by the on-device model, which greatly simplifies compliance with laws like HIPAA and GDPR.

Does running AI on a smartphone drain the battery?

It used to, but modern smartphones are equipped with Neural Processing Units (NPUs) or “Neural Engines.” These specialized chips are optimized to execute the matrix operations behind machine learning extremely efficiently, preventing severe battery drain and device overheating.

What are the limitations of Edge AI?

Edge AI has strict hardware limitations. Massive models (like GPT-4 with billions of parameters) simply cannot fit into a smartphone’s RAM. Furthermore, placing large models in your app bundle makes the download size huge, which deters users from downloading your app from the App Store.

What is a Hybrid AI Architecture?

A Hybrid AI Architecture intelligently divides the workload. It uses small, fast Edge AI models for real-time tasks (like live camera filters or instant sentiment analysis) to avoid network latency, and routes complex, logic-heavy tasks to powerful Cloud APIs when an internet connection is available.