For a Chief Technology Officer (CTO) or VP of Engineering, the greatest bottleneck to enterprise growth is the speed at which the engineering team can ship secure, bug-free code. Technical debt accumulates, code reviews pile up in pull requests, and developers spend 40% of their time writing boilerplate tests rather than architecting core business logic.

In 2026, the solution to this bottleneck is no longer just “hiring more developers.” The solution is augmenting your existing team with generative AI for software development.

We have moved far beyond simple auto-complete tools. Today, elite engineering teams are deploying autonomous AI agents directly into their repositories and CI/CD pipelines. These agents can review code, write unit tests, refactor legacy systems, and automatically generate documentation.

In this technical deep-dive, we will explore how to architect and integrate AI coding agents to multiply your team’s output. To understand how this fits into the broader evolution of enterprise technology, read our master guide on the future of generative AI software development.

If your organization is looking to build these advanced developer tools natively, MindRind provides elite generative AI development solutions to help you architect secure, autonomous agentic workflows.

Chapter 1: The Evolution from Copilots to Autonomous Agents

To fully leverage AI in software engineering, technical leaders must understand the evolutionary leap from “Copilots” to “Autonomous Agents.”

The Reactive Era (GitHub Copilot)

Early generative AI tools for developers, such as the initial versions of GitHub Copilot or Tabnine, were strictly reactive. They functioned as highly advanced, statistically driven auto-complete engines living inside the Integrated Development Environment (IDE).

- How it works: A developer writes a comment like // fetch user data from API, and the LLM generates the corresponding JavaScript fetch function.

- The Limitation: These tools require constant human hand-holding. They lack a macro-level understanding of the entire codebase and cannot execute commands, run terminal scripts, or push code.

The Proactive Era (Autonomous AI Agents)

In 2026, enterprise software teams are building and deploying Autonomous AI Agents using frameworks like LangChain and AutoGPT. Unlike Copilots, agents are proactive and stateful.

- How it works: An agent is given a high-level goal, such as “Migrate this Python 2.7 script to Python 3.10 and write unit tests for the new functions.”

- The Execution: The agent autonomously reads the file, identifies deprecated syntax, rewrites the code, generates a test file using pytest, runs the tests in a sandboxed terminal, analyzes any error logs, fixes its own mistakes, and submits a Git Pull Request (PR) for human review.
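The execution loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production agent: `run_agent`, `fake_propose_fix`, and `fake_run_tests` are hypothetical names, the model call is replaced by a hard-coded stub, and the "test run" is only a compile check plus a string match. A real agent would call an LLM for each proposal and run pytest inside a sandbox.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class TestResult:
    passed: bool
    log: str


@dataclass
class AgentRun:
    attempts: int = 0
    history: List[str] = field(default_factory=list)
    final_code: str = ""
    succeeded: bool = False


def run_agent(
    source: str,
    propose_fix: Callable[[str, str], str],
    run_tests: Callable[[str], TestResult],
    max_attempts: int = 3,
) -> AgentRun:
    """Rewrite -> test -> feed the error log back, until tests pass or attempts run out."""
    run = AgentRun()
    code, feedback = source, ""
    for attempt in range(1, max_attempts + 1):
        run.attempts = attempt
        code = propose_fix(code, feedback)   # in a real agent: an LLM call
        result = run_tests(code)             # in a real agent: pytest in a sandbox
        run.history.append(result.log)
        if result.passed:
            run.final_code, run.succeeded = code, True
            break
        feedback = result.log                # the agent "reads" its own error log
    return run


# --- Hypothetical stand-ins so the sketch runs without a model or a sandbox ---
def fake_propose_fix(code: str, feedback: str) -> str:
    if 'print "' in code:                    # first pass: fix the print statement
        return code.replace('print "done"', 'print("done")')
    return code.replace("xrange", "range")   # later pass: fix what the log reported


def fake_run_tests(code: str) -> TestResult:
    try:
        compile(code, "<candidate>", "exec")
    except SyntaxError as exc:
        return TestResult(False, f"SyntaxError: {exc.msg}")
    if "xrange" in code:
        return TestResult(False, "NameError: name 'xrange' is not defined")
    return TestResult(True, "all tests passed")


LEGACY = 'for i in xrange(3):\n    pass\nprint "done"\n'

if __name__ == "__main__":
    outcome = run_agent(LEGACY, fake_propose_fix, fake_run_tests)
    print(outcome.attempts, outcome.succeeded)
```

The `max_attempts` cap matters in practice: without it, an agent that cannot fix its own mistake would loop and burn tokens indefinitely. Only a run with `succeeded` set would go on to open a Pull Request.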

This level of automation drastically reduces cognitive load on human engineers, allowing them to focus strictly on system architecture and complex problem-solving.

Chapter 2: Architecting an AI Software Agent

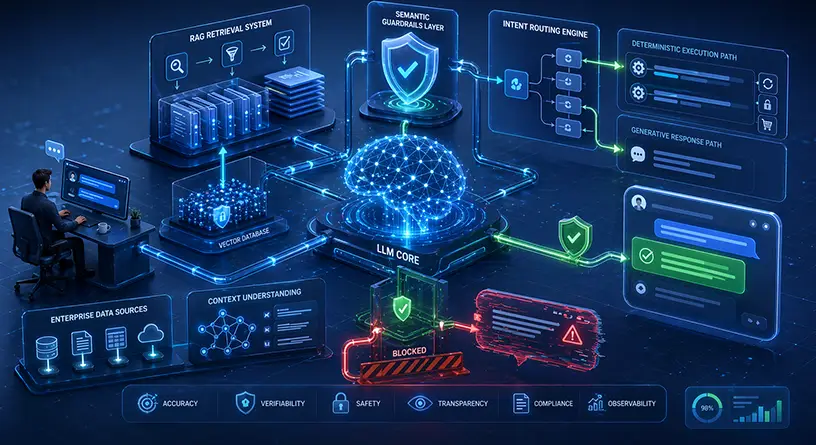

Building an AI agent that can safely interact with your proprietary codebase requires rigorous backend engineering. You cannot simply give a Large Language Model unrestricted access to your production servers.

Here is the blueprint for building a secure, internal AI software developer agent:

The LLM Brain (Reasoning Engine)

The core of the agent is a foundation model with exceptional coding capabilities (such as GPT-4o, Claude 3.5 Sonnet, or a Llama 3 model fine-tuned on code). The model needs a context window large enough to hold thousands of lines of code at once without losing track of the code’s structure.

The Tool-Calling Interface

An LLM alone cannot “do” anything; it can only generate text. To make it an agent, you must bind the LLM to functional tools using orchestration frameworks. The agent needs access to:

- File System Tools: To read, write, and modify .js, .py, or .go files.

- Terminal Tools: A secure, containerized Docker environment where the AI can run commands like npm install, go build, or execute test suites.

- Git Tools: APIs that allow the AI to run git commit, create branches, and push to GitHub or GitLab.
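To make the tool-binding idea concrete, here is a small sketch of a tool registry that you could place between the model and the file system. It does not use any particular orchestration framework; `ToolRegistry`, `build_registry`, and the JSON call shape (`{"tool": ..., "args": ...}`) are assumptions made for illustration. The point it shows is that the model only ever emits a text request, and your own code decides whether and how to carry it out.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable, Dict


class ToolRegistry:
    """Maps tool names the model may request to vetted Python callables."""

    def __init__(self, workspace: Path) -> None:
        self.workspace = workspace.resolve()
        self._tools: Dict[str, Callable[..., str]] = {}

    def register(self, name: str, fn: Callable[..., str]) -> None:
        self._tools[name] = fn

    def safe_path(self, relative: str) -> Path:
        """Resolve a path and refuse anything outside the checked-out workspace."""
        candidate = (self.workspace / relative).resolve()
        if candidate != self.workspace and self.workspace not in candidate.parents:
            raise PermissionError(f"{relative!r} escapes the workspace")
        return candidate

    def dispatch(self, call_json: str) -> str:
        """Execute one tool call emitted by the model; errors go back as text."""
        call = json.loads(call_json)
        tool = self._tools.get(call.get("tool"))
        if tool is None:
            return f"error: unknown tool {call.get('tool')!r}"
        try:
            return tool(**call.get("args", {}))
        except (PermissionError, FileNotFoundError, TypeError) as exc:
            return f"error: {exc}"


def build_registry(workspace: Path) -> ToolRegistry:
    registry = ToolRegistry(workspace)

    def read_file(path: str) -> str:
        return registry.safe_path(path).read_text()

    def write_file(path: str, content: str) -> str:
        target = registry.safe_path(path)
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content)
        return "ok"

    registry.register("read_file", read_file)
    registry.register("write_file", write_file)
    return registry


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        tools = build_registry(Path(tmp))
        request = {"tool": "write_file", "args": {"path": "src/a.py", "content": "x = 1\n"}}
        print(tools.dispatch(json.dumps(request)))
        print(tools.dispatch(json.dumps({"tool": "read_file", "args": {"path": "../secrets.txt"}})))
```

Returning errors as strings rather than raising them is a deliberate choice in this sketch: the error text can be fed back to the model so it can correct its next request. Terminal and Git tools would be registered the same way, with their own checks.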

Abstract Syntax Tree (AST) Parsing

When dealing with massive enterprise repositories, the AI cannot read every single file (it would exhaust the context window and token budget). The agent must use AST parsing and semantic search to navigate the codebase, extracting only the specific functions and dependencies relevant to the task.
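As a rough illustration of AST-based navigation, the sketch below uses Python's standard `ast` module to pull out one target function plus the same-file functions it calls, and nothing else. The helper names (`function_index`, `relevant_context`) and the sample module are hypothetical, and the sketch only follows direct calls by plain name within a single file; a real agent would combine this with cross-file resolution and semantic search.

```python
import ast
from typing import Dict, List


def function_index(source: str) -> Dict[str, dict]:
    """Map each function name to its source text and the plain names it calls."""
    tree = ast.parse(source)
    index: Dict[str, dict] = {}
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            calls = sorted(
                {
                    call.func.id
                    for call in ast.walk(node)
                    if isinstance(call, ast.Call) and isinstance(call.func, ast.Name)
                }
            )
            index[node.name] = {
                "source": ast.get_source_segment(source, node),
                "calls": calls,
            }
    return index


def relevant_context(source: str, target: str) -> str:
    """Return the target function and its transitive same-file callees only."""
    index = function_index(source)
    wanted: List[str] = []
    seen = set()
    stack = [target]
    while stack:
        name = stack.pop()
        if name in seen or name not in index:
            continue                      # builtins and imported names are skipped
        seen.add(name)
        wanted.append(index[name]["source"])
        stack.extend(index[name]["calls"])
    return "\n\n".join(wanted)


SAMPLE = '''
def load(path):
    return open(path).read()

def parse(text):
    return text.split(",")

def unrelated():
    return 42

def main(path):
    return parse(load(path))
'''

if __name__ == "__main__":
    # Only main, parse, and load are sent to the model; unrelated() is left out.
    print(relevant_context(SAMPLE, "main"))
```

The payoff is in the prompt size: the model receives three short functions instead of the whole module, which is the same saving, scaled up, that keeps a large repository within the token budget.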

To ensure this complex reasoning process does not crash under high usage, your engineering team must build a highly robust agent architecture capable of asynchronous execution and fault-tolerant tool calling.
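One way to read "asynchronous execution and fault-tolerant tool calling" is a timeout plus bounded retries with exponential backoff around every tool call. The sketch below uses only the standard `asyncio` module; `call_tool` and `FlakyTool` are hypothetical names, and the retry count, timeout, and delays are placeholder values rather than recommendations.

```python
import asyncio
from typing import Any, Awaitable, Callable, Optional


async def call_tool(
    tool: Callable[..., Awaitable[Any]],
    *args: Any,
    retries: int = 3,
    timeout: float = 5.0,
    base_delay: float = 0.5,
) -> Any:
    """Run one tool call with a timeout, retrying transient failures with backoff."""
    last_error: Optional[BaseException] = None
    for attempt in range(retries):
        try:
            return await asyncio.wait_for(tool(*args), timeout=timeout)
        except (asyncio.TimeoutError, ConnectionError) as exc:
            last_error = exc
            if attempt < retries - 1:
                await asyncio.sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...
    raise RuntimeError(f"tool failed after {retries} attempts") from last_error


class FlakyTool:
    """Hypothetical stand-in for a sandbox call that fails a few times first."""

    def __init__(self, failures: int) -> None:
        self.failures = failures
        self.calls = 0

    async def __call__(self, command: str) -> str:
        self.calls += 1
        if self.calls <= self.failures:
            raise ConnectionError("sandbox unreachable")
        return f"ran: {command}"


async def main() -> None:
    # Independent tool calls can run concurrently instead of one after another.
    results = await asyncio.gather(
        call_tool(FlakyTool(failures=1), "pytest -q", base_delay=0.01),
        call_tool(FlakyTool(failures=0), "go build", base_delay=0.01),
    )
    print(results)


if __name__ == "__main__":
    asyncio.run(main())
```

Only errors that are plausibly transient are retried here. A failing test suite, for example, is a result the agent should see and reason about, not something to retry blindly.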

Chapter 3: Integrating AI into the CI/CD Pipeline

The true ROI of generative AI for software development teams is unlocked when AI agents are embedded directly into your Continuous Integration and Continuous Deployment (CI/CD) pipelines.

By integrating AI into tools like GitHub Actions, Jenkins, or GitLab CI, you create an automated, intelligent quality assurance layer that operates 24/7.

Automated Code Reviews and Security Audits

Traditionally, a Lead Engineer spends hours reviewing Pull Requests (PRs). An AI agent can intercept the PR the moment it is opened.

- The agent analyzes the diff (the changes made).

- It checks the code against the company’s internal style guides.

- It performs static analysis to detect common vulnerabilities (such as SQL injection or hardcoded API keys) and performance problems (such as avoidable O(n^2) loops).

- It automatically posts inline comments on the PR, suggesting refactors before the human Lead Engineer even looks at it.
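The review steps above can be approximated, in a very reduced form, without any model at all. The sketch below walks a unified diff in `git diff` format, looks only at added lines, and produces inline-comment candidates with file and line numbers. The two regular expressions are deliberately crude heuristics chosen for illustration, and `review_diff`, `Comment`, and the sample diff are hypothetical; an actual agent would pass the flagged hunks (or the whole diff) to an LLM and post the results through the hosting platform's review API.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Heuristic rules (assumptions for this sketch, not a complete security scanner).
RULES = [
    ("possible hardcoded secret",
     re.compile(r"""(?i)(api[_-]?key|secret|token|password)\s*=\s*['"][^'"]{8,}['"]""")),
    ("SQL built by string formatting",
     re.compile(r"""execute\(\s*(f['"]|['"].*['"]\s*(\+|%))""")),
]
HUNK_RE = re.compile(r"^@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@")


@dataclass(frozen=True)
class Comment:
    path: Optional[str]
    line: int          # line number in the new version of the file
    message: str


def review_diff(diff_text: str) -> List[Comment]:
    comments: List[Comment] = []
    path: Optional[str] = None
    new_line = 0
    in_hunk = False
    for raw in diff_text.splitlines():
        hunk = HUNK_RE.match(raw)
        if hunk:
            new_line, in_hunk = int(hunk.group(1)), True
        elif raw.startswith("diff "):
            in_hunk = False                       # next file starts
        elif not in_hunk:
            if raw.startswith("+++ "):
                path = raw[4:]
                if path.startswith("b/"):
                    path = path[2:]
        elif raw.startswith("+"):
            for message, pattern in RULES:
                if pattern.search(raw[1:]):
                    comments.append(Comment(path, new_line, message))
            new_line += 1
        elif raw.startswith(" ") or raw == "":
            new_line += 1                         # context line
        # removed lines ("-") do not exist in the new file, so no increment
    return comments


SAMPLE_DIFF = "\n".join([
    "diff --git a/app/db.py b/app/db.py",
    "--- a/app/db.py",
    "+++ b/app/db.py",
    "@@ -10,3 +10,4 @@ def get_user(cur, user_id):",
    "     # look up a single user",
    '+    API_KEY = "abcd1234efgh5678"',
    '+    cur.execute(f"SELECT * FROM users WHERE id = {user_id}")',
    '-    cur.execute("SELECT 1")',
    "     return cur.fetchone()",
])

if __name__ == "__main__":
    for comment in review_diff(SAMPLE_DIFF):
        print(f"{comment.path}:{comment.line}: {comment.message}")
```

Restricting the check to added lines keeps the review focused on what the PR actually changes, so the author is not asked to fix problems that were already in the file.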

Test Automation Generation

Writing unit and integration tests is the most neglected chore in software engineering. AI agents excel at test automation. When a developer pushes a new API endpoint, a CI-triggered AI agent can automatically read the endpoint logic and generate comprehensive test cases covering positive flows, negative flows, and edge-case exceptions.
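To show what "positive flows, negative flows, and edge-case exceptions" can look like in practice, here is a hand-written example of the kind of test file an agent might produce. Both the endpoint handler `create_user` and its validation rules are invented for this illustration. The tests are plain `test_*` functions with bare assertions, so they can be collected by pytest or called directly.

```python
from typing import Any, Dict, Tuple

MAX_NAME_LENGTH = 50


def create_user(payload: Dict[str, Any]) -> Tuple[int, Dict[str, Any]]:
    """Hypothetical endpoint logic: returns (HTTP status, response body)."""
    email = payload.get("email")
    name = payload.get("name")
    if not isinstance(email, str) or "@" not in email:
        return 400, {"error": "invalid email"}
    if not isinstance(name, str) or not name.strip():
        return 400, {"error": "name is required"}
    if len(name) > MAX_NAME_LENGTH:
        return 400, {"error": "name too long"}
    return 201, {"email": email.lower(), "name": name.strip()}


# Positive flow: a valid payload is accepted and normalized.
def test_creates_user_with_valid_payload():
    status, body = create_user({"email": "Ada@Example.com", "name": " Ada "})
    assert status == 201
    assert body == {"email": "ada@example.com", "name": "Ada"}


# Negative flows: missing or malformed input is rejected with a clear error.
def test_rejects_missing_email():
    assert create_user({"name": "Ada"}) == (400, {"error": "invalid email"})


def test_rejects_non_string_email():
    assert create_user({"email": 42, "name": "Ada"}) == (400, {"error": "invalid email"})


def test_rejects_blank_name():
    status, body = create_user({"email": "ada@example.com", "name": "   "})
    assert (status, body) == (400, {"error": "name is required"})


# Edge case: the length limit is inclusive at exactly MAX_NAME_LENGTH characters.
def test_name_length_boundary():
    ok_status, _ = create_user({"email": "a@b.co", "name": "a" * MAX_NAME_LENGTH})
    bad_status, bad_body = create_user({"email": "a@b.co", "name": "a" * (MAX_NAME_LENGTH + 1)})
    assert ok_status == 201
    assert (bad_status, bad_body) == (400, {"error": "name too long"})
```

Generated tests still deserve review: a model can just as easily encode a bug as expected behavior, so a human should confirm that each assertion reflects what the endpoint is supposed to do.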

This increases the codebase’s test coverage, which lowers the likelihood that bugs reach the production environment.

Chapter 4: Crushing Technical Debt and Legacy Refactoring

Every enterprise tech company carries technical debt. Millions of lines of legacy code, often written in outdated languages or frameworks, sit in repositories, slowing down deployment speeds and frustrating modern developers.

Refactoring this code manually is a massive financial drain and poses a high risk of breaking the system. This is where AI agents provide the highest measurable ROI.

Automated Code Translation

Consider an enterprise banking software built on an outdated Java 8 monolithic backend. Upgrading it to a modern, microservices-based architecture using Go or Node.js would traditionally take a team of engineers over a year.

A generative AI agent can automate the bulk of this translation process. The AI can read the legacy Java code, understand the core business logic, and output functionally equivalent code in modern Go. Furthermore, it can automatically generate the necessary Dockerfiles and Kubernetes manifests required to deploy the new microservices.

Documentation Generation

Legacy codebases are notoriously undocumented. When the original developer leaves the company, the knowledge leaves with them. AI agents can be deployed to crawl through massive, undocumented repositories. Using Natural Language Processing, the agent reads the code, deduces its functionality, and automatically generates comprehensive README files, inline comments, and OpenAPI/Swagger documentation for legacy APIs.
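Before any text is generated, the agent has to find out what is undocumented. A minimal sketch of that first step, using Python's standard `ast` module, is shown below. The function names, the prompt wording, and the sample module are assumptions for illustration; the model call that would turn the prompt into docstrings is intentionally left out.

```python
import ast
from typing import List, Tuple


def undocumented_functions(source: str) -> List[Tuple[str, int, str]]:
    """Return (name, line number, signature) for every function without a docstring."""
    tree = ast.parse(source)
    missing = []
    for node in ast.walk(tree):
        is_function = isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        if is_function and ast.get_docstring(node) is None:
            params = ", ".join(arg.arg for arg in node.args.args)
            missing.append((node.name, node.lineno, f"{node.name}({params})"))
    return sorted(missing, key=lambda item: item[1])


def documentation_prompt(source: str) -> str:
    """Build the instruction an agent could send to the model for this file."""
    targets = undocumented_functions(source)
    bullets = "\n".join(f"- {signature} (line {line})" for _, line, signature in targets)
    return "Write a one-paragraph docstring for each function below.\n" + bullets


LEGACY = '''def calc(a, b):
    return a * b + 7

def load(path):
    """Read the file at path and return its text."""
    return open(path).read()
'''

if __name__ == "__main__":
    print(documentation_prompt(LEGACY))
```

Targeting only the functions that lack documentation keeps the agent from rewriting docstrings that engineers have already written and reviewed.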

Chapter 5: The Developer Transition (The Human Element)

Integrating autonomous AI agents into your software development lifecycle is not just a technical challenge; it is a cultural shift.

CTOs must manage the transition of their human developers from “Code Writers” to “Code Reviewers and Orchestrators.” As AI takes over the boilerplate typing, your human engineers must elevate their skills. They must become experts in prompt engineering, system architecture, and understanding the probabilistic outputs of LLMs.

If your current team is struggling to adapt to this new paradigm, or if you need engineers capable of building these complex AI agents natively, you must rethink your hiring strategy. You must know the specific developer skills needed for the AI transition.

Furthermore, if building these internal tools is taking too much time away from your core SaaS product, many enterprises are choosing to bypass the learning curve entirely. Exploring the financial benefits of hiring external generative AI developers can instantly provide your team with the AI infrastructure they need without halting your current sprint cycles.

Accelerate Your Engineering Team with MindRind

Generative AI is not here to replace elite software engineers; it is here to multiply their output. The tech companies that arm their developers with autonomous AI agents today will completely outpace their competitors in feature delivery, bug reduction, and system scalability tomorrow.

Building a secure, sandboxed AI agent that can autonomously navigate your proprietary GitHub repositories requires highly specialized MLOps and backend engineering.

At MindRind, our core expertise is generative AI for software development. We build and deploy bespoke autonomous coding agents, integrate AI directly into your CI/CD pipelines, and secure your IP within Virtual Private Clouds. We do the heavy ML lifting so your developers can focus on what they do best: building incredible software.

Ready to 10x your engineering team’s output? Contact MindRind today to integrate autonomous AI into your software development lifecycle.

Frequently Asked Questions

What is the difference between an AI Copilot and an Autonomous AI Agent?

An AI Copilot (like GitHub Copilot) is reactive; it sits in the developer’s IDE and auto-completes code snippets based on the immediate context. An Autonomous AI Agent is proactive. It is given a high-level goal, and it can independently read files, write code, run terminal commands, execute tests, and submit Pull Requests without human intervention.

Is it safe to give AI agents access to a proprietary codebase?

Yes, but security architecture is paramount. AI agents should never be run on public models without enterprise agreements. Agents must be deployed within secure, sandboxed environments (like containerized Docker instances) and use either enterprise API endpoints that contractually offer zero data retention or locally hosted open-source LLMs.
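As one possible reading of "sandboxed", the sketch below builds a locked-down `docker run` command line for executing a test suite against a checked-out repository. The flags are standard Docker CLI options, but the specific limits, the image name, and the function name `sandbox_command` are assumptions to adjust for your environment. The function only builds the argument list, so nothing is executed until you pass it to something like `subprocess.run`.

```python
from typing import List


def sandbox_command(
    workspace: str,
    command: List[str],
    image: str = "python:3.12-slim",   # assumption: any image with your toolchain baked in
) -> List[str]:
    """Build a restrictive `docker run` argv for one agent-issued command."""
    return [
        "docker", "run", "--rm",
        "--network", "none",                    # no outbound access from the sandbox
        "--read-only",                          # root filesystem is immutable
        "--tmpfs", "/tmp",                      # scratch space that vanishes on exit
        "--cap-drop", "ALL",                    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",
        "--memory", "1g",
        "--cpus", "1",
        "--pids-limit", "256",                  # contain fork bombs
        "--volume", f"{workspace}:/workspace",  # only the checkout is writable
        "--workdir", "/workspace",
        image,
        *command,
    ]


if __name__ == "__main__":
    argv = sandbox_command("/srv/checkouts/repo", ["pytest", "-q"])
    print(" ".join(argv))
```

With networking disabled, commands such as npm install cannot download packages at run time, so dependencies need to be pre-installed in the image or supplied from a vetted internal mirror on a separately configured network.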

How can generative AI be integrated into a CI/CD pipeline?

Generative AI can be integrated into pipelines (like GitHub Actions or GitLab CI) via webhooks. When a developer opens a Pull Request, the CI triggers the AI agent. The AI automatically analyzes the code diff, checks for security vulnerabilities, enforces style guides, and posts a review before a human engineer merges the code.
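For the webhook route on GitHub specifically, the receiving service should verify that a delivery really came from GitHub before it triggers the agent. GitHub signs each delivery with HMAC-SHA256 and sends the result in the `X-Hub-Signature-256` header as `sha256=<hex digest>`. The sketch below shows that check plus a simple filter for pull request events; the function names and the choice of which actions trigger a review are assumptions, and the HTTP server around it is omitted.

```python
import hashlib
import hmac
import json

# Assumption: review when a PR is opened, reopened, or receives new commits.
REVIEW_ACTIONS = {"opened", "reopened", "synchronize"}


def verify_signature(secret: bytes, body: bytes, signature_header: str) -> bool:
    """Check the X-Hub-Signature-256 header against the raw request body."""
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_header)  # constant-time compare


def should_review(event_name: str, body: bytes) -> bool:
    """event_name is the X-GitHub-Event header; body is the raw JSON payload."""
    if event_name != "pull_request":
        return False
    payload = json.loads(body)
    return payload.get("action") in REVIEW_ACTIONS


if __name__ == "__main__":
    secret = b"example-webhook-secret"            # placeholder, never hardcode in practice
    body = json.dumps({"action": "opened", "pull_request": {"number": 1}}).encode()
    header = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    if verify_signature(secret, body, header) and should_review("pull_request", body):
        print("trigger review agent")
```

The signature must be computed over the raw bytes of the request body, before any JSON parsing, because re-serializing the payload can change whitespace or key order and invalidate the digest.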

Can AI agents write unit and integration tests?

Absolutely. Writing unit and integration tests is one of the most effective use cases for generative AI in software engineering. AI agents can analyze new functions, identify edge cases, and generate comprehensive test suites using frameworks like Jest, PyTest, or JUnit, significantly increasing code coverage.

How does generative AI help with legacy code and technical debt?

AI models excel at code translation and comprehension. An AI agent can analyze a massive legacy codebase (e.g., outdated PHP or Java), map the core business logic, and automatically rewrite the application into modern frameworks (like React or Go) while also generating the missing documentation.

Will generative AI replace software developers?

No. Generative AI replaces the tedious, boilerplate aspects of coding (typing syntax, writing basic tests). Human developers will transition from being “typists” to “architects.” They will spend their time designing system logic, orchestrating AI agents, and solving complex business problems that AI cannot comprehend.

Which frameworks are used to build AI coding agents?

To build an AI agent, developers use orchestration frameworks like LangChain, AutoGPT, or CrewAI. These frameworks allow the LLM to access “tools” such as file system readers, secure terminal executors, and Git APIs, enabling the AI to perform actual software engineering tasks.

How should software engineers adapt to AI-driven development?

Traditional software engineers must adapt by learning advanced Prompt Engineering, understanding LLM context window limitations, and mastering architectural design. They must become proficient in reading and reviewing AI-generated code, quickly identifying hallucinations or subtle logical errors before deploying to production.